Disclosure of Invention

In view of the above, an object of the present application is to provide an information retrieval method, an apparatus, an electronic device, and a storage medium, where the method determines a first word vector of a target vocabulary in a target query sentence and a central word vector of the target vocabulary in each target document based on a trained target information retrieval model, and determines a first relevancy between the query sentence and the target document according to the first word vector and the central word vector, and because the central word vector of the target vocabulary in the present application is determined based on a plurality of sliding windows in each target document, semantics and word senses of the target query sentence corresponding to the target vocabulary and the obtained retrieved documents are better matched, thereby improving accuracy of information retrieval.

The embodiment of the application provides an information retrieval method, which comprises the following steps:

inputting a target query sentence into a query layer in a trained target information retrieval model to obtain at least one target vocabulary which is the same between the target query sentence and each target document in a text library; wherein the target document is a document in the text base with the same vocabulary as the target query sentence;

inputting each obtained target vocabulary into a word vector extraction network layer in a trained target information retrieval model to obtain a first word vector of each target vocabulary in the target query sentence and a second word vector of each target vocabulary in a sliding window of each target document; the sliding window is a window which comprises a first preset number of adjacent characters in the target document and comprises at least one character in at least one target vocabulary; the two adjacent sliding windows contain the overlapping part of a second preset number of characters;

for any target vocabulary in each target document, determining a central word vector of the target vocabulary based on the second word vectors of the sliding windows related to the target vocabulary;

inputting a first word vector and a center word vector corresponding to a target word of each target document into a relevance hierarchy in a trained target information retrieval model, and calculating to obtain a first relevance between the target query statement and each target document;

and selecting a preset number of target documents as retrieval documents to be output according to the sequence of the relevance between the target query statement and the target documents from high to low.

Further, for any target word in each target document, determining a central word vector of the target word based on the second word vectors of the respective sliding windows associated with the target word, including:

aiming at any target vocabulary in each target document, acquiring a second word vector of the target vocabulary in each sliding window;

and performing summation calculation on each second word vector, performing average calculation after the summation calculation, and determining the average as the central word vector of the target vocabulary.

Further, a first degree of correlation between the target query statement and each of the target documents is calculated by:

performing point multiplication calculation on a first word vector corresponding to each target word and each central word vector corresponding to each target word, and determining a point multiplication result as a second degree of correlation between each target word and each target document;

and performing summation calculation on the second relevancy corresponding to the target vocabularies in each target document, and determining a summation result as the first relevancy between the target query statement and each target document.

Further, the performing a point multiplication calculation on the first word vector corresponding to each target word and each central word vector corresponding to each target word, and determining a point multiplication result as a second degree of correlation between each target word and each target document includes:

performing point multiplication calculation on a first word vector corresponding to each target word and each central word vector corresponding to each target word, and selecting a maximum point multiplication value as a result relevancy;

determining the result relevance as a second relevance between each of the target words and each of the target documents.

Further, determining a trained target information retrieval model in the following manner;

acquiring a sample query statement and a sample document corresponding to the sample query statement;

dividing the sample query sentence and the sample document according to a preset relevance, determining the sample query sentence and the sample document with the relevance more than or equal to the preset relevance as sample related texts, and determining the sample query sentence and the sample document with the relevance less than the preset relevance as sample unrelated texts;

and training an initial information retrieval model according to the sample related text and the sample unrelated text, and determining a trained target information retrieval model.

An embodiment of the present application further provides an information retrieval apparatus, where the information retrieval apparatus includes:

the first determination module is used for inputting a target query sentence into a query layer in a trained target information retrieval model to obtain at least one target vocabulary which is the same between the target query sentence and each target document in a text library; wherein the target document is a document in the text base with the same vocabulary as the target query sentence;

a second determining module, configured to input each obtained target word into a word vector extraction network layer in a trained target information retrieval model, to obtain a first word vector of each target word in the target query statement and a second word vector of each target word in a sliding window in each target document; the sliding window is a window which comprises a first preset number of adjacent characters in the target document and comprises at least one character in at least one target vocabulary; the two adjacent sliding windows contain the overlapping part of a second preset number of characters;

the third determining module is used for determining a central word vector of each target word in each target document based on the second word vectors of the sliding windows related to the target word;

the calculation module is used for inputting a first word vector and a center word vector corresponding to a target word of each target document into a relevance hierarchy in a trained target information retrieval model, and calculating to obtain a first relevance between the target query statement and each target document;

and the fourth determining module is used for selecting a preset number of target documents as retrieval documents to be output according to the sequence of the relevance between the target query statement and the target documents from high to low.

Further, the determining, by the third determining module, a central word vector of each target word in each target document based on the second word vectors of the respective sliding windows associated with the target word includes:

aiming at any target vocabulary in each target document, acquiring a second word vector of the target vocabulary in each sliding window;

and performing summation calculation on each second word vector, performing average calculation after the summation calculation, and determining the average as the central word vector of the target vocabulary.

Further, the calculation module calculates a first degree of correlation between the target query statement and each of the target documents by:

performing point multiplication calculation on a first word vector corresponding to each target word and each central word vector corresponding to each target word, and determining a point multiplication result as a second degree of correlation between each target word and each target document;

and performing summation calculation on the second relevancy corresponding to the target vocabularies in each target document, and determining a summation result as the first relevancy between the target query statement and each target document.

An embodiment of the present application further provides an electronic device, including: a processor, a memory and a bus, the memory storing machine-readable instructions executable by the processor, the processor and the memory communicating via the bus when the electronic device is operating, the machine-readable instructions when executed by the processor performing the steps of the information retrieval method as described above.

Embodiments of the present application further provide a computer-readable storage medium, on which a computer program is stored, where the computer program is executed by a processor to perform the steps of the information retrieval method as described above.

Compared with the prior art, the information retrieval method, the information retrieval device, the electronic equipment and the storage medium provided by the embodiment of the application are characterized in that a first word vector of a target vocabulary in a target query sentence and a central word vector of the target vocabulary in each target document are determined based on a trained target information retrieval model, and a first correlation degree between the query sentence and the target document is determined according to the first word vector and the central word vector.

In order to make the aforementioned objects, features and advantages of the present application more comprehensible, preferred embodiments accompanied with figures are described in detail below.

Detailed Description

In order to make the objects, technical solutions and advantages of the embodiments of the present application clearer, the technical solutions in the embodiments of the present application will be clearly and completely described below with reference to the drawings in the embodiments of the present application, and it is obvious that the described embodiments are only a part of the embodiments of the present application, and not all the embodiments. The components of the embodiments of the present application, generally described and illustrated in the figures herein, can be arranged and designed in a wide variety of different configurations. Thus, the following detailed description of the embodiments of the present application, presented in the accompanying drawings, is not intended to limit the scope of the claimed application, but is merely representative of selected embodiments of the application. Every other embodiment that can be obtained by a person skilled in the art without making creative efforts based on the embodiments of the present application falls within the protection scope of the present application.

Firstly, introduction is made to an application scenario applicable to the present application, and research finds that an information retrieval method in the prior art is mainly to retrieve a search text in a database based on a matching algorithm of word frequency, and the information retrieval method based on word frequency is still a mainstream method of a current retrieval system.

Based on this, the embodiment of the application provides an information retrieval method, an information retrieval device, an electronic device and a storage medium, based on a trained target information retrieval model, a first word vector of a target vocabulary in a target query sentence is determined, a central word vector of the target vocabulary in each target document is determined, and a first relevancy between the query sentence and the target document is determined according to the first word vector and the central word vector.

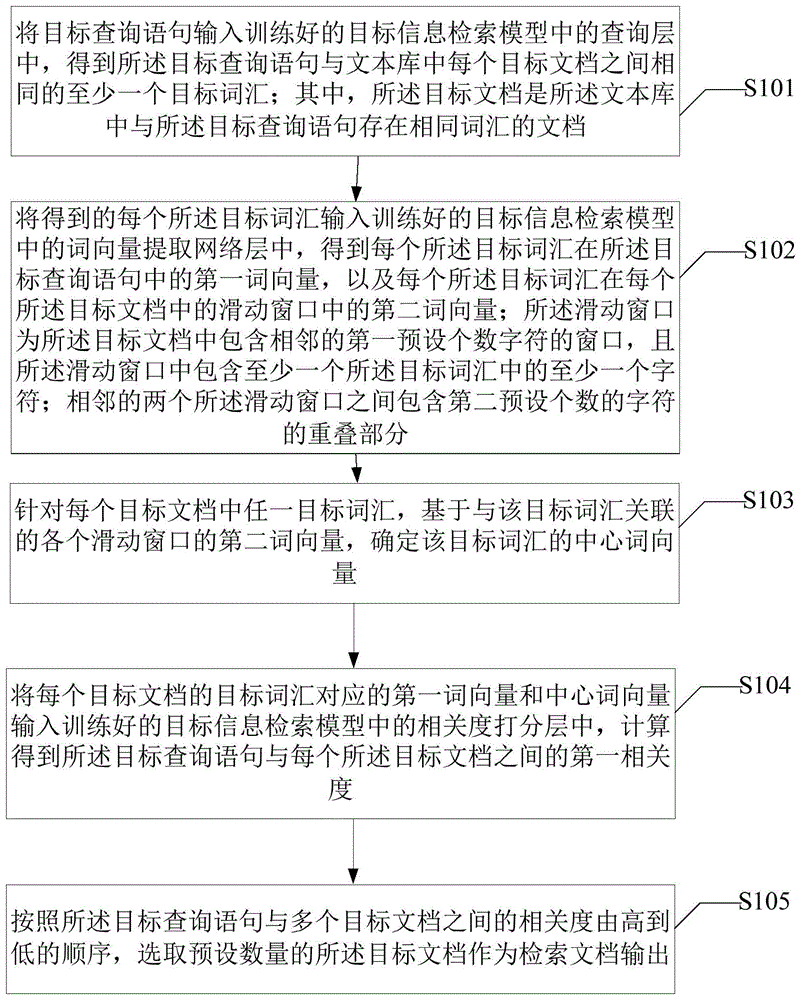

Referring to fig. 1, fig. 1 is a flowchart of an information retrieval method according to an embodiment of the present disclosure. As shown in fig. 1, an information retrieval method provided in an embodiment of the present application includes:

s101, inputting a target query sentence into a query layer in a trained target information retrieval model to obtain at least one target vocabulary which is the same between the target query sentence and each target document in a text library; and the target document is a document with the same vocabulary as the target query sentence in the text library.

In the step, after a user generates a series of retrieval documents corresponding to a target query sentence to be queried, the target query sentence is input into a query network layer in a trained target information retrieval model to obtain a target vocabulary between the target query sentence and each target document in a text library, wherein the documents in the text library are screened to remove the documents which are not related to the target query sentence, and the documents in the text library which have the same vocabulary as the target query sentence are screened out to be determined as the target documents.

In the embodiment provided by the application, the target vocabulary is expressed by q, wherein qi is expressed as the ith marked target vocabulary, and the query network layer is used for representing the network structure layer used for determining the target vocabulary in the target information retrieval model.

The target information retrieval model is obtained by training the initial information retrieval model, and the trained target information retrieval model is determined in the following mode;

and acquiring a sample query statement and a sample document corresponding to the sample query statement.

Firstly, obtaining an initial sample query statement and an initial sample document corresponding to the initial sample query statement, wherein the initial sample query statement is obtained from a log or other external sample databases, then manually marking the initial sample document corresponding to the initial sample query statement, carrying out denoising processing on the initial query statement and the initial sample document, and deleting meaningless special characters, such as spaces, messy codes and the like, wherein the characters are cleaned by using a regular expression.

The regular expression is a logic formula for operating on character strings, namely a 'regular character string' is formed by using a plurality of specific characters defined in advance and a combination of the specific characters, and the 'regular character string' is used for expressing a filtering logic for the character strings.

And carrying out relevance division on the sample query sentence and the sample document according to preset relevance, determining that the sample query sentence and the sample document with the relevance more than or equal to the preset relevance are sample related texts, and determining that the sample query sentence and the sample document with the relevance less than the preset relevance are sample unrelated texts.

The sample related text and the sample irrelevant text both comprise a sample training set and a sample verification set for training an initial information detection model, a python programming language is used for dividing the relevance between a sample query statement and a sample document according to the preset relevance, the sample query statement and the sample document with the relevance being more than or equal to the preset relevance are used as the sample related text, the sample related text is compared with the preset relevance by carrying out relevance labeling on the sample query statement and the sample document, the sample query statement and the sample document with the relevance being less than the preset relevance are used as the sample irrelevant text, and the sample query statement and the sample document with high predicted relevance obtained according to a probability retrieval algorithm are determined as difficult irrelevant samples.

Thus, the probabilistic search algorithm is an algorithm proposed based on a probabilistic search model, which includes, but is not limited to, the use of the BM25 information search algorithm.

And training the initial information retrieval model according to the sample training set and the sample verification set, and determining a trained target information retrieval model.

The initial information retrieval model is trained according to the sample training set, network structure parameters in the initial information retrieval model are updated and replaced in real time by using the sample verification set in the training process, further, the training effect of the initial information retrieval model is verified, and after the training is finished, model documents in the trained target information retrieval model are correspondingly stored, so that a subsequent information retrieval task or a reordering task of the target documents is facilitated.

The trained target information retrieval model can be applied to different online retrieval systems, and specifically, documents in a text library are processed offline by using a document encoder, so that preprocessing of the documents based on target query sentences is realized, and some noises in the documents are deleted; and then loading a trained target information retrieval model in a retrieval system, initializing the trained target information retrieval model, inputting the denoised target query sentences, and outputting a preset number of target documents corresponding to the target query sentences as retrieval documents.

Thirdly, the trained target information retrieval model can be used for recalling other documents outside the text base and reordering the other documents, and the specific process is as follows: firstly, loading a trained target information retrieval model, initializing the trained target information retrieval model, secondly, performing offline processing on documents in a text library by using a document encoder, then recalling target documents of other text libraries except the specified number of text libraries by using a BM25 information retrieval algorithm in a search engine, performing relevance matching on the target documents outside the recalled text libraries based on the initialized target information retrieval documents, and outputting a preset number of target documents as the recalled retrieval documents.

The method includes the steps of using an initial information retrieval model to encode a character string represented by a sample query statement and a character string represented by a sample document corresponding to the sample query statement respectively, wherein the initial information retrieval model in the embodiment provided by the application can be a language model based on BERT coding, but is not limited to use of the BERT coding, and using an Adam optimizer to fine tune a BERT coder, so that a function of adjusting parameters of the initial information retrieval model in a training process is realized.

Wherein, the initial information retrieval model can be trained by using a negative log-likelihood function to determine a trained target information retrieval model, the likelihood function is a function about parameters in the model, and the "likelihood (likelihood)" and "probability (probability)" are similar in meaning, but they have completely different meanings in statistics: the probabilities are used to predict the next observation with known parameters; the likelihoods are used to estimate the possible values of the parameters of a given model from some observations.

Here, Token is used to represent a string of character strings generated by the sample query statement and the sample document, as a Token requested by the client, after logging in for the first time, the server generates a Token to return the Token to the client, and the client only needs to take the Token to request data before later. In the embodiment provided by the present application, Token is represented as any one character or word in a sample query statement and a sample document, that is, each sample query statement and each sample document contain a plurality of tokens, and the number of tokens depends on the number of characters or words.

Here, the character string Token is specifically expressed as:

here, the first and second liquid crystal display panels are,

is a matrix, which maps nlm dimension output of the initial information retrieval model into low dimension nt vector, and the trained target information retrieval model is [ CLS ] in the added pre-training language model (BERT)]A matched information retrieval model, wherein the pre-training language model learns the deep bidirectional representation in the label-free data through pre-training, and [ CLS ] is added in the pre-training language model]The output character vector corresponding to the symbol is used as the semantic representation of the whole text or sentence.

Further, a standard sample query text and a standard sample document corresponding to the standard sample query text are obtained by the following method:

and acquiring an initial sample query text and an initial standard sample document corresponding to the initial sample query text.

And denoising the initial sample query text and the initial standard sample document to determine a standard sample query text and a standard sample document.

S102, inputting each obtained target vocabulary into a word vector extraction network layer in a trained target information retrieval model to obtain a first word vector of each target vocabulary in the target query statement and a second word vector of each target vocabulary in a sliding window of each target document; the sliding window is a window which comprises a first preset number of adjacent characters in the target document and comprises at least one character in at least one target vocabulary; and the adjacent two sliding windows contain the overlapping parts of a second preset number of characters.

Inputting each target vocabulary between a target query sentence and each target document in a text library into a word vector extraction network layer in a trained target information retrieval model to obtain a first word vector of the target vocabulary in the target query sentence and a second word vector of the target vocabulary in a sliding window of each target document, wherein the first word vector is specifically expressed based on Token's code as:

the english name of the target query statement is query, where the abbreviation q is used to denote,

a character string representing the ith labeled target query statement, b

tokIs a coefficient, W

tokCoefficients in the form of a matrix.

And wherein, need to consider the relevance of different target vocabularies in the target query statement, we utilize [ CLS ] matching in BERT to obtain the first word vector, and the specific expression of the first word vector at this time is:

here, Token-based encoding of the second word vector is specifically expressed as:

here, the english name of the target document is document, and in the formula, it is represented by the abbreviation d,

ith labeled target document string, b

tokAre coefficients.

And wherein, need to consider the relevance of different target vocabularies in the target query statement, we utilize [ CLS ] matching in BERT to obtain the second word vector, and the specific expression of the second word vector at this time is:

in the above-mentioned description,

and

the correlation degree between the words can provide high-level semantic matching information and relieve the problem of word mismatching.

Thus, the sliding window is a window that slides in a preset direction in each target document, and the sliding window includes a first preset number of characters, where the first preset number is not fixed, the user-defined number of characters can be set according to the expression characteristics of Chinese Hanzi, and each sliding window comprises at least one character in at least one target vocabulary, and the overlapping part of the second preset number of characters is included between two adjacent sliding windows, here, the embodiment section provided by the present application sets the overlapping part of the second preset number of characters as one character, that is, when the sliding window slides in a preset direction in any target document and when a target vocabulary appears in the sliding window, the next sliding window adjacent thereto is a sliding window separated from the above sliding window by one overlapping character.

As shown in fig. 2, fig. 2 is a structural diagram of a relationship between a sliding window and a target document in an information retrieval method according to an embodiment of the present application.

Wherein, the set sliding window includes four characters, that is, the first preset number of characters is four, for example: the target query statement provided by this embodiment is "pride behind", and one target document in the corresponding text library is "being a chinese, a girl got an olympic champion, i felt nobody pride and self-luxury".

Here, the target query sentence and the target vocabulary in the target document are only one, and the target vocabulary is specifically "pride", at this time, all the sliding windows in which any character "pride" or "pride" including the target vocabulary "pride" appears in the target vocabulary are obtained, and the second word vector based on the semantics of the target vocabulary in each sliding window is obtained by using the encoder, where the sliding window including the target vocabulary "pride" in fig. 2 is specifically represented as follows: "to pride", "pride and self".

If there are multiple target words in the target document, the process of setting a sliding window for each target word is the same as the above process, and is not described herein again.

S103, aiming at any target vocabulary in each target document, determining a central word vector of the target vocabulary based on the second word vectors of the sliding windows associated with the target vocabulary.

In the step, the second word vectors of each sliding window associated with any target vocabulary are subjected to summation average calculation, and the central word vector of the target vocabulary in the target document is determined.

Thus, the center word vector of the target vocabulary in the target document is denoted by CK.

S104, inputting the first word vector and the center word vector corresponding to the target vocabulary of each target document into a relevance hierarchy in a trained target information retrieval model, and calculating to obtain the first relevance between the target query statement and each target document.

In the step, after a first word vector and a center word vector corresponding to a target word of each target document are input into a relevance calculation network layer in a trained target information retrieval model, dot product calculation is carried out on the first word vector corresponding to each target word and each center word vector corresponding to each target word, the maximum dot product value is selected as the result relevance, and the dot product result is determined as a second relevance between each target word and each target document.

Here, the second relevancy of each target word in each target document is summed, and the summed result is determined as the first relevancy between the target query statement and each target document.

The expression for calculating the first correlation between the target query statement and each target document specifically includes:

in the formula, qiAnd e q # d represents that the ith marked target word is the same word shared by the target query statement and the target document, wherein the maximum point product value is selected, namely max operation is carried out to capture important semantic information of the target word in the target document.

In the above manner of calculating the first relevance between the target query statement and each of the target documents, which is not the [ CLS ] matching manner introduced into BERT, the following formula is an expression for calculating the first relevance to which the [ CLS ] matching manner is added:

in the formula, full represents the output of the correlation calculation network layer in the trained target information retrieval model with [ CLS ] matching added.

And S105, selecting a preset number of target documents as retrieval documents to be output according to the sequence from high to low of the correlation degree between the target query statement and the target documents.

In this step, when a user queries a target query statement, a preset number of target documents ranked from high to low according to the relevance are output as search documents by inputting the target query statement into a trained target information search model, and the search documents can be used as answer documents of the target query statement.

Compared with the information retrieval method in the prior art, the information retrieval method provided by the embodiment of the application is based on the trained target information retrieval model, the first word vector of the target vocabulary in the target query sentence is determined, the center word vector of the target vocabulary in each target document is determined, the first relevancy between the query sentence and the target document is determined according to the first word vector and the center word vector, and the center word vector of the target vocabulary in the information retrieval method is determined based on the plurality of sliding windows in each target document, so that the semantics and word senses of the target query sentence corresponding to the target vocabulary and the obtained retrieved document are matched better, and the accuracy of information retrieval is improved.

Referring to fig. 3, fig. 3 is a flowchart of an information retrieval method according to another embodiment of the present application. As shown in fig. 3, an information retrieval method provided in an embodiment of the present application includes:

s201, inputting a target query sentence into a query layer in a trained target information retrieval model to obtain at least one target vocabulary which is the same between the target query sentence and each target document in a text library; and the target document is a document with the same vocabulary as the target query sentence in the text library.

S202, inputting each obtained target vocabulary into a word vector extraction network layer in a trained target information retrieval model to obtain a first word vector of each target vocabulary in the target query statement and a second word vector of each target vocabulary in a sliding window of each target document; the sliding window is a window which comprises a first preset number of adjacent characters in the target document and comprises at least one character in at least one target vocabulary; and the adjacent two sliding windows contain the overlapping parts of a second preset number of characters.

S203, aiming at any target vocabulary in each target document, acquiring a second word vector of the target vocabulary in each sliding window.

In the step, for a plurality of target vocabularies in each target document, second word vectors of any target vocabulary in each sliding window are obtained.

Wherein, the window slides in each target document according to the preset direction, and the sliding window comprises a first preset number of characters, wherein, the setting of the first preset number is not fixed, the user-defined number of characters can be set according to the expression characteristics of Chinese Hanzi, and each sliding window comprises at least one character in at least one target vocabulary, and the overlapping part of the second preset number of characters is included between two adjacent sliding windows, here, the embodiment section provided by the present application sets the overlapping part of the second preset number of characters as one character, that is, when the sliding window slides in a preset direction in any target document and when a target vocabulary appears in the sliding window, the next sliding window adjacent thereto is a sliding window separated from the above sliding window by one overlapping character.

And S204, performing summation calculation on each second word vector, performing average calculation after the summation calculation, and determining the average as the central word vector of the target vocabulary.

In the step, the central word vector of each target vocabulary is obtained by summing the second word vectors of the sliding window and then averaging the sum.

S205, inputting the first word vector and the center word vector corresponding to the target vocabulary of each target document into a relevance hierarchy in a trained target information retrieval model, and calculating to obtain a first relevance between the target query statement and each target document.

S206, selecting a preset number of target documents as retrieval documents to be output according to the sequence from high to low of the correlation degree between the target query statement and the target documents.

The descriptions of S201 to S202 and S205 to S206 may refer to the descriptions of S101 to S102 and S104 to S105, and the same technical effects can be achieved, which is not described in detail herein.

Compared with the information retrieval method in the prior art, the information retrieval method provided by the embodiment of the application is based on the trained target information retrieval model, the first word vector of the target vocabulary in the target query sentence is determined, the center word vector of the target vocabulary in each target document is determined, the first relevancy between the query sentence and the target document is determined according to the first word vector and the center word vector, and the center word vector of the target vocabulary in the information retrieval method is determined based on the plurality of sliding windows in each target document, so that the semantics and word senses of the target query sentence corresponding to the target vocabulary and the obtained retrieved document are matched better, and the accuracy of information retrieval is improved.

Referring to fig. 4, fig. 4 is a schematic structural diagram of an information retrieval device according to an embodiment of the present disclosure. As shown in fig. 4, the information retrieval apparatus 400 includes:

a first determining module 410, configured to input a target query statement into a query layer in a trained target information retrieval model, to obtain at least one target vocabulary that is the same between the target query statement and each target document in a text base; and the target document is a document with the same vocabulary as the target query sentence in the text library.

A second determining module 420, configured to input each obtained target word into a word vector extraction network layer in a trained target information retrieval model, so as to obtain a first word vector of each target word in the target query statement and a second word vector of each target word in a sliding window in each target document; the sliding window is a window which comprises a first preset number of adjacent characters in the target document and comprises at least one character in at least one target vocabulary; and the adjacent two sliding windows contain the overlapping parts of a second preset number of characters.

And a third determining module 430, configured to determine, for any target word in each target document, a central word vector of the target word based on the second word vectors of the respective sliding windows associated with the target word.

Further, the third determining module determines, for any target word in each target document, a central word vector of the target word based on the second word vectors of the respective sliding windows associated with the target word, including:

and acquiring a second word vector of each target word in each sliding window aiming at any target word in each target document.

And performing summation calculation on each second word vector, performing average calculation after the summation calculation, and determining the average as the central word vector of the target vocabulary.

The calculating module 440 is configured to input the first word vector and the center word vector corresponding to the target vocabulary of each target document into a relevance hierarchy in a trained target information retrieval model, and calculate a first relevance between the target query statement and each target document.

Further, in the calculating module 440, a first degree of correlation between the target query statement and each of the target documents is calculated by:

and performing point multiplication calculation on the first word vector corresponding to each target word and each central word vector corresponding to each target word, and determining a point multiplication result as a second degree of correlation between each target word and each target document.

And performing summation calculation on the second relevancy corresponding to the target vocabularies in each target document, and determining a summation result as the first relevancy between the target query statement and each target document.

A fourth determining module 440, configured to select a preset number of target documents as search documents to be output according to a sequence that the relevance between the target query statement and the target documents is from high to low.

Compared with the prior art, the information retrieval apparatus 400 provided by the embodiment of the present application is characterized in that, based on a trained target information retrieval model, a first word vector of a target word in a target query sentence and a center word vector of the target word in each target document are determined, and a first relevancy between the query sentence and the target document is determined according to the first word vector and the center word vector.

Referring to fig. 5, fig. 5 is a schematic structural diagram of an electronic device according to an embodiment of the present disclosure. As shown in fig. 5, the electronic device 500 includes a processor 510, a memory 520, and a bus 530.

The memory 520 stores machine-readable instructions executable by the processor 510, when the electronic device 500 runs, the processor 510 communicates with the memory 520 through the bus 530, and when the machine-readable instructions are executed by the processor 510, the steps of the information retrieval method in the method embodiments shown in fig. 1 and fig. 4 may be executed.

An embodiment of the present application further provides a computer-readable storage medium, where a computer program is stored on the computer-readable storage medium, and when the computer program is executed by a processor, the steps of the information retrieval method in the method embodiments shown in fig. 1 and fig. 4 may be executed.

It is clear to those skilled in the art that, for convenience and brevity of description, the specific working processes of the above-described systems, apparatuses and units may refer to the corresponding processes in the foregoing method embodiments, and are not described herein again.

In the several embodiments provided in the present application, it should be understood that the disclosed system, apparatus and method may be implemented in other ways. The above-described embodiments of the apparatus are merely illustrative, and for example, the division of the units is only one logical division, and there may be other divisions when actually implemented, and for example, a plurality of units or components may be combined or integrated into another system, or some features may be omitted, or not executed. In addition, the shown or discussed mutual coupling or direct coupling or communication connection may be an indirect coupling or communication connection of devices or units through some communication interfaces, and may be in an electrical, mechanical or other form.

The units described as separate parts may or may not be physically separate, and parts displayed as units may or may not be physical units, may be located in one place, or may be distributed on a plurality of network units. Some or all of the units can be selected according to actual needs to achieve the purpose of the solution of the embodiment.

In addition, functional units in the embodiments of the present application may be integrated into one processing unit, or each unit may exist alone physically, or two or more units are integrated into one unit.

The functions, if implemented in the form of software functional units and sold or used as a stand-alone product, may be stored in a non-volatile computer-readable storage medium executable by a processor. Based on such understanding, the technical solution of the present application or portions thereof that substantially contribute to the prior art may be embodied in the form of a software product stored in a storage medium and including instructions for causing a computer device (which may be a personal computer, a server, or a network device) to execute all or part of the steps of the method according to the embodiments of the present application. And the aforementioned storage medium includes: various media capable of storing program codes, such as a usb disk, a removable hard disk, a Read-Only Memory (ROM), a Random Access Memory (RAM), a magnetic disk, or an optical disk.

Finally, it should be noted that: the above-mentioned embodiments are only specific embodiments of the present application, and are used for illustrating the technical solutions of the present application, but not limiting the same, and the scope of the present application is not limited thereto, and although the present application is described in detail with reference to the foregoing embodiments, those skilled in the art should understand that: any person skilled in the art can modify or easily conceive the technical solutions described in the foregoing embodiments or equivalent substitutes for some technical features within the technical scope disclosed in the present application; such modifications, changes or substitutions do not depart from the spirit and scope of the exemplary embodiments of the present application, and are intended to be covered by the scope of the present application. Therefore, the protection scope of the present application shall be subject to the protection scope of the claims.