CN115577112B - Event extraction method and system based on type perception gated attention mechanism - Google Patents

Event extraction method and system based on type perception gated attention mechanism

- Publication number

- CN115577112B

- Authority

- CN

- China

- Prior art keywords

- event

- trigger word

- argument

- vector

- text

- Prior art date

- Legal status (The legal status is an assumption and is not a legal conclusion. Google has not performed a legal analysis and makes no representation as to the accuracy of the status listed.)

- Active

Classifications

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06F—ELECTRIC DIGITAL DATA PROCESSING

- G06F16/00—Information retrieval; Database structures therefor; File system structures therefor

- G06F16/30—Information retrieval; Database structures therefor; File system structures therefor of unstructured textual data

- G06F16/35—Clustering; Classification

- G06F16/353—Clustering; Classification into predefined classes

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06F—ELECTRIC DIGITAL DATA PROCESSING

- G06F16/00—Information retrieval; Database structures therefor; File system structures therefor

- G06F16/30—Information retrieval; Database structures therefor; File system structures therefor of unstructured textual data

- G06F16/33—Querying

- G06F16/3331—Query processing

- G06F16/334—Query execution

- G06F16/3346—Query execution using probabilistic model

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06N—COMPUTING ARRANGEMENTS BASED ON SPECIFIC COMPUTATIONAL MODELS

- G06N3/00—Computing arrangements based on biological models

- G06N3/02—Neural networks

- G06N3/08—Learning methods

-

- Y—GENERAL TAGGING OF NEW TECHNOLOGICAL DEVELOPMENTS; GENERAL TAGGING OF CROSS-SECTIONAL TECHNOLOGIES SPANNING OVER SEVERAL SECTIONS OF THE IPC; TECHNICAL SUBJECTS COVERED BY FORMER USPC CROSS-REFERENCE ART COLLECTIONS [XRACs] AND DIGESTS

- Y02—TECHNOLOGIES OR APPLICATIONS FOR MITIGATION OR ADAPTATION AGAINST CLIMATE CHANGE

- Y02D—CLIMATE CHANGE MITIGATION TECHNOLOGIES IN INFORMATION AND COMMUNICATION TECHNOLOGIES [ICT], I.E. INFORMATION AND COMMUNICATION TECHNOLOGIES AIMING AT THE REDUCTION OF THEIR OWN ENERGY USE

- Y02D10/00—Energy efficient computing, e.g. low power processors, power management or thermal management

Landscapes

- Engineering & Computer Science (AREA)

- Theoretical Computer Science (AREA)

- Physics & Mathematics (AREA)

- Data Mining & Analysis (AREA)

- General Engineering & Computer Science (AREA)

- General Physics & Mathematics (AREA)

- Computational Linguistics (AREA)

- Databases & Information Systems (AREA)

- Life Sciences & Earth Sciences (AREA)

- General Health & Medical Sciences (AREA)

- Probability & Statistics with Applications (AREA)

- Artificial Intelligence (AREA)

- Biomedical Technology (AREA)

- Biophysics (AREA)

- Evolutionary Computation (AREA)

- Health & Medical Sciences (AREA)

- Molecular Biology (AREA)

- Computing Systems (AREA)

- Mathematical Physics (AREA)

- Software Systems (AREA)

- Machine Translation (AREA)

- Information Retrieval, Db Structures And Fs Structures Therefor (AREA)

Abstract

The invention relates to the technical field of information extraction and discloses an event extraction method and system based on a type-aware gated attention mechanism. Guided by gating information, the method lets different information flow to the trigger words under different event categories and filters out noise unrelated to the trigger words. The invention addresses the low accuracy of event argument extraction and the poor role classification found in the prior art.

Description

Technical Field

The present invention relates to the technical field of information extraction, and in particular to an event extraction method and system based on a type-aware gated attention mechanism.

Background Art

Event extraction is a fundamental and highly challenging task in information extraction. It usually comprises two tasks: event detection and event argument extraction. More specifically, event detection comprises two subtasks, trigger word detection and event classification, and event argument extraction comprises two subtasks, argument detection and role classification. In recent years, with the continued development of deep learning, deep-learning-based event extraction methods have improved to some extent, but the difficulties of event extraction have not yet been fully resolved.

At present, most event extraction methods concentrate on overlapping-argument scenarios and neglect overlapping-trigger-word scenarios and trigger word ambiguity. In other words, not only may an argument play different roles in different events or in the same event, but a trigger word may also correspond to multiple event types.

In addition, event argument extraction is harder than event detection. Many methods try to use role information to improve argument extraction, such as role frequency (importance) and role correlations like hierarchical concept relations or syntactic relations between roles. Role frequency ignores the relationships between roles, while the other role correlations have to be summarized from human experience and do not apply to some data, so they improve event argument extraction only slightly.

Summary of the Invention

To overcome the deficiencies of the prior art, the present invention provides an event extraction method and system based on a type-aware gated attention mechanism, which addresses the low accuracy of event argument extraction and the poor role classification found in the prior art.

The technical solution adopted by the present invention to solve the above problems is as follows:

An event extraction method based on a type-aware gated attention mechanism, which uses gating information as guidance so that different information flows to the trigger words under different event categories, and which filters out noise unrelated to the trigger words.

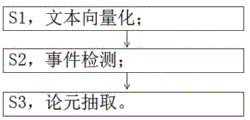

In a preferred technical solution, the method comprises the following steps:

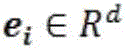

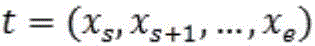

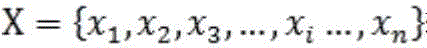

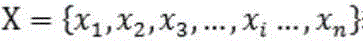

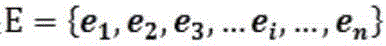

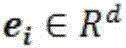

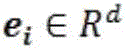

S1, text vectorization: the sample $X = (x_1, \dots, x_n)$ is input into a language-model-based text vectorization layer to obtain the text vectorization result $H = (h_1, \dots, h_n)$, where $x_i$ denotes the $i$-th character of the text, $h_i \in \mathbb{R}^d$ denotes the vectorization result corresponding to $x_i$, $H$ denotes the vectorization result of the text $X$, $\mathbb{R}$ denotes the real numbers, $d$ denotes the vector dimension, and $\mathbb{R}^d$ denotes the $d$-dimensional real vectors;

S2, event detection: the text vectorization result $H$ is input into the event detection module, which integrates the type-aware gated attention mechanism, to complete the two subtasks of trigger word detection and event classification;

S3, argument extraction: for each trigger word of each event type in the result of step S2, the argument extraction module, which integrates learnable role interaction parameters, completes the two subtasks of argument extraction and argument role classification.
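The cascade in steps S1 to S3 can be sketched as a small driver loop. This is only an illustration of the data flow, not the patented implementation: the three callables `vectorize`, `detect_events` and `extract_arguments` are hypothetical stand-ins for the vectorization layer, the event detection module and the argument extraction module, and the output dictionary layout is an assumption.

```python
from typing import Callable, Dict, List, Set, Tuple

Span = Tuple[int, int]  # (start, end) character positions, both inclusive


def extract_events(
    text: str,
    vectorize: Callable[[str], List[List[float]]],
    detect_events: Callable[[List[List[float]]], Dict[Span, Set[str]]],
    extract_arguments: Callable[[List[List[float]], Span, str], Dict[str, List[Span]]],
) -> List[dict]:
    """Run S1, then S2, then S3 once per (trigger word, event type) pair."""
    hidden = vectorize(text)  # S1: one vector per character
    events = []
    # S2: every trigger word may carry several event types (overlapping triggers).
    for trigger, event_types in detect_events(hidden).items():
        for event_type in sorted(event_types):
            # S3: arguments are extracted separately for each trigger/type pair.
            arguments = extract_arguments(hidden, trigger, event_type)
            events.append({"trigger": trigger, "type": event_type, "arguments": arguments})
    return events
```

Because the inner loop runs once per event type of a trigger word, a single trigger word with two event types yields two events, which is how the cascade accommodates overlapping trigger words.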

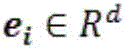

In a preferred technical solution, the event detection module integrating the type-aware gated attention mechanism in step S2 comprises the following sub-modules connected in series: a trigger word extraction layer and a gated attention event classification layer.

In a preferred technical solution, the trigger word extraction layer is constructed as follows:

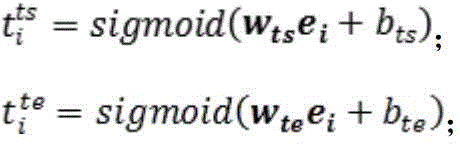

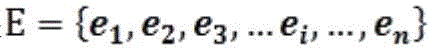

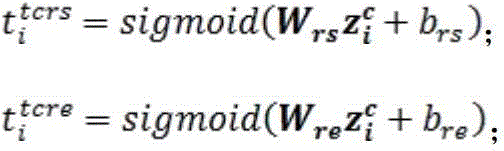

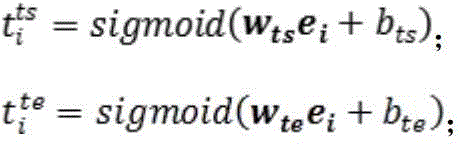

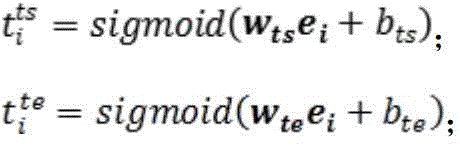

S21, first compute, for each character of the input text, the probability that it is the start or end character of a trigger word:

$$p_i^{s} = \mathrm{sigmoid}(W_s h_i + b_s), \qquad p_i^{e} = \mathrm{sigmoid}(W_e h_i + b_e)$$

where $W_s$, $b_s$, $W_e$ and $b_e$ are learnable network parameters, sigmoid is the activation function, $p_i^{s}$ is the probability that the $i$-th character of the input text is the start character of a trigger word, and $p_i^{e}$ is the probability that the $i$-th character is the end character of a trigger word;

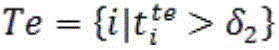

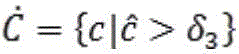

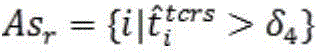

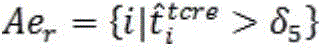

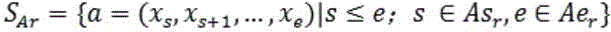

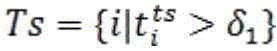

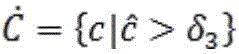

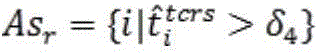

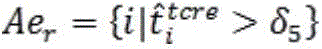

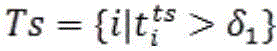

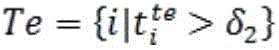

S22, filter the results of S21 with the preset thresholds $\delta_s$ and $\delta_e$ to obtain the position sets $S$ and $E$:

$$S = \{\, i \mid p_i^{s} > \delta_s \,\}$$

$$E = \{\, i \mid p_i^{e} > \delta_e \,\}$$

where $S$ denotes the set of start character positions of trigger words and $E$ denotes the set of end character positions of trigger words;

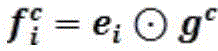

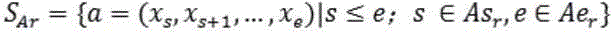

S23, using the result of step S22, obtain the trigger word set $T$ by the nearest-match principle;

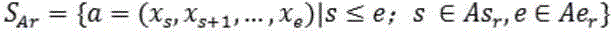

where $t$ is a candidate trigger word, $s$ is the position in the text $X$ of the start character of $t$, and $e$ is the element of the set $E$ closest to $s$.
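Steps S22 and S23 amount to thresholding two probability sequences and pairing each start position with its nearest end position. The sketch below is a minimal illustration under two assumptions that the extracted text does not settle: the comparison with the threshold is strict, and "closest" means the nearest end position at or after the start. The function name and the default thresholds are hypothetical.

```python
from typing import List, Sequence, Tuple


def decode_spans(
    start_probs: Sequence[float],
    end_probs: Sequence[float],
    start_threshold: float = 0.5,
    end_threshold: float = 0.5,
) -> List[Tuple[int, int]]:
    """Threshold start/end probabilities, then pair each start with its nearest end."""
    # S22: keep the positions whose probability exceeds the threshold.
    starts = [i for i, p in enumerate(start_probs) if p > start_threshold]
    ends = [i for i, p in enumerate(end_probs) if p > end_threshold]
    spans = []
    for s in starts:
        # S23 (assumed reading): the closest end position that is not before s.
        following = [e for e in ends if e >= s]
        if following:
            spans.append((s, min(following)))
    return spans
```

For start probabilities `[0.1, 0.9, 0.2, 0.8, 0.1]` and end probabilities `[0.1, 0.2, 0.95, 0.7, 0.1]`, the start positions are 1 and 3, the end positions are 2 and 3, and the decoded spans are `(1, 2)` and `(3, 3)`. The same routine could serve for the argument spans of steps S34 and S35, applied once per role.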

The gated attention event classification layer is constructed as follows:

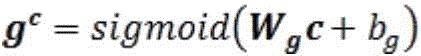

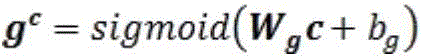

S24, in the gated information filtering layer, for each event category $c$, define an event category semantic vector $u_c$ and compute from it the corresponding gate vector $g_c$ with the gating unit, where $g_c$ is the gate vector under event category $c$, $W_g$ is the learnable weight parameter of the gating unit, and $b_g$ is the learnable bias parameter of the gating unit;

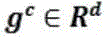

S25, using the result of S24, filter the context information under each event category with the element-wise product:

$$h_i^{c} = g_c \odot h_i$$

where $h_i$ is the vector of the $i$-th character of the input text, and $h_i^{c}$ is the vector of the $i$-th character after information filtering under event category $c$ by the gated information filtering layer;
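The gating of S24 and S25 can be illustrated with plain lists. The sketch assumes that the gating unit is a linear layer followed by a sigmoid, which the extracted text does not confirm (it only names a weight and a bias); the function names are hypothetical.

```python
import math
from typing import List


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def gate_vector(category_vec: List[float], weight: List[List[float]], bias: List[float]) -> List[float]:
    """S24 (assumed form): gate = sigmoid(weight @ category_vec + bias), one value per dimension."""
    return [
        sigmoid(sum(w * c for w, c in zip(row, category_vec)) + b)
        for row, b in zip(weight, bias)
    ]


def filter_tokens(token_vecs: List[List[float]], gate: List[float]) -> List[List[float]]:
    """S25: element-wise product of the gate with every character vector."""
    return [[g * h for g, h in zip(gate, vec)] for vec in token_vecs]
```

Each event category has its own gate, so the same character vector is scaled differently per category: dimensions whose gate is near 0 are suppressed as noise, and dimensions whose gate is near 1 pass through.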

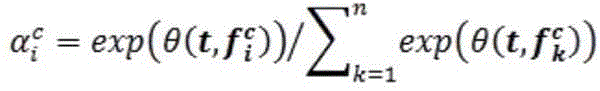

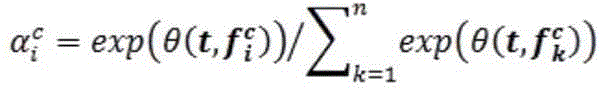

S26, in the attention information fusion layer, use the attention function to obtain the importance score $\alpha_i^{c,t}$ of the $i$-th character of the input text for the trigger word $t$ under event category $c$;

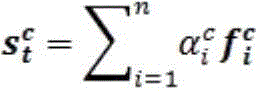

S27, using the importance scores computed in S26, obtain, under each event category, the final information aggregation result for each trigger word with the following formula:

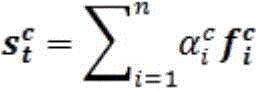

where $z_t^{c}$ is the information aggregation vector related to the trigger word $t$ under event category $c$, output by the attention information fusion layer;

S28, in the event classification layer, using the information aggregation vector related to the trigger word $t$ obtained in step S27, determine the event types to which $t$ belongs with the following formula:

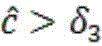

where $w$ is the learnable weight parameter of the event category decision unit, $b$ is the bias parameter of the event category decision unit, and $w^{T}$ denotes the transpose of $w$;

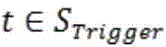

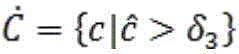

Given a preset threshold $\delta$, every event category $c$ whose predicted probability for the trigger word $t$ exceeds $\delta$ is assigned to $t$ as one of its event categories.

The final event category set of each trigger word $t$ is denoted $C_t$.
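The decision rule of S28 is a multi-label one: each event category is tested independently against the threshold, so a trigger word can end up with several categories. The sketch below assumes a score of the form sigmoid(w^T z + b) with a weight vector and bias shared across categories and a strict comparison; the category names are purely illustrative.

```python
import math
from typing import Dict, List, Set


def classify_trigger(
    aggregated: Dict[str, List[float]],
    weight: List[float],
    bias: float,
    threshold: float = 0.5,
) -> Set[str]:
    """Return every event category whose score for this trigger word exceeds the threshold."""
    selected = set()
    for category, vec in aggregated.items():
        # Assumed scoring form: sigmoid of a dot product plus a bias.
        logit = sum(w * v for w, v in zip(weight, vec)) + bias
        score = 1.0 / (1.0 + math.exp(-logit))
        if score > threshold:
            selected.add(category)
    return selected
```

With aggregation vectors `{"Attack": [2.0, 0.0], "Transfer": [-2.0, 0.0], "Meet": [1.0, 1.0]}`, weight `[1.0, 0.0]` and bias `0.0`, the default threshold keeps `Attack` and `Meet`; raising the threshold to `0.8` keeps only `Attack`.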

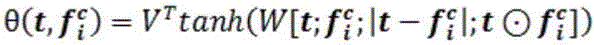

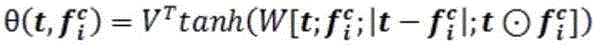

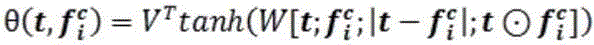

In a preferred technical solution, the attention function in step S26 is defined as follows:

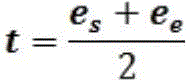

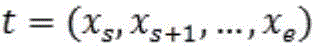

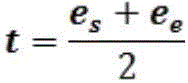

where $h_t$ is the representation vector of the trigger word $t$, computed from $h_s$ and $h_e$, with $h_s$ denoting the representation vector of the start character of $t$ and $h_e$ denoting the representation vector of the end character of $t$.

The scoring function used by the attention function is defined with the following symbols: $\tanh$ denotes the tanh activation function, $V^{T}$ denotes the transpose of $V$, $W$ denotes the weights, and $[\,;\,;\,]$ denotes vector concatenation.
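How the importance scores of S26 feed the aggregation of S27 can be sketched as follows. Two points are assumptions rather than facts from the source: that the raw scores are normalized with a softmax, and that the aggregation is an importance-weighted sum of the filtered character vectors. The raw scores are taken as given inputs here, because the exact arguments of the scoring function are not recoverable from the extracted text.

```python
import math
from typing import List


def softmax(scores: List[float]) -> List[float]:
    """Numerically stable softmax: subtract the maximum before exponentiating."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def aggregate(filtered_vecs: List[List[float]], raw_scores: List[float]) -> List[float]:
    """Importance-weighted sum of the filtered character vectors (assumed form of S27)."""
    weights = softmax(raw_scores)
    dim = len(filtered_vecs[0])
    return [sum(w * vec[k] for w, vec in zip(weights, filtered_vecs)) for k in range(dim)]
```

With equal raw scores the result is the plain average of the character vectors; when one score dominates, the aggregate approaches that character's vector.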

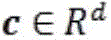

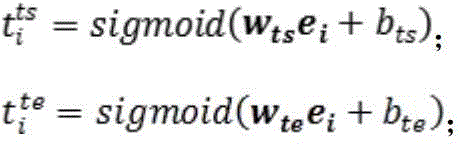

In a preferred technical solution, the argument extraction module of step S3, which integrates learnable role interaction parameters, is constructed as follows:

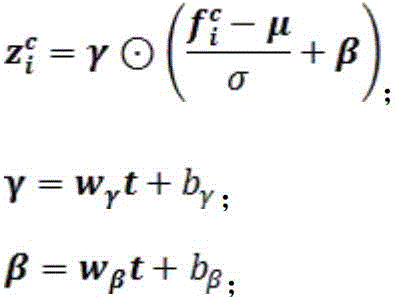

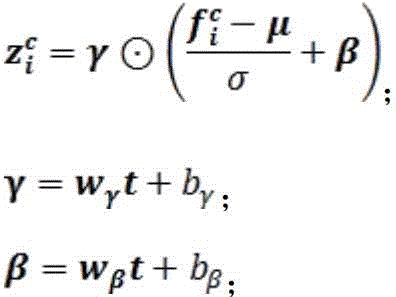

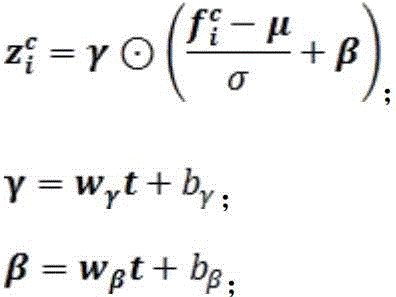

S31, integrate the trigger word representation vector $h_t$ into the context with the following formula:

$$\tilde{h}_i^{c,t} = \gamma_t \odot \frac{h_i^{c} - \mu}{\sigma} + \beta_t, \qquad \gamma_t = W_\gamma h_t + b_\gamma, \qquad \beta_t = W_\beta h_t + b_\beta$$

where $\mu$ is the mean computed from the vector $h_i^{c}$ of the input text after information filtering under event category $c$, $\sigma$ is the standard deviation computed from the same vector, $\tilde{h}_i^{c,t}$ denotes the vector of the $i$-th character of the input text after information filtering under event category $c$ and fusion with the information of the trigger word $t$, $\gamma_t$ and $\beta_t$ denote the scale parameter and the shift parameter respectively, $W_\gamma$ and $b_\gamma$ denote the weight and bias of the linear layer that computes $\gamma_t$, and $W_\beta$ and $b_\beta$ denote the weight and bias of the linear layer that computes $\beta_t$;
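The definitions in S31 (a mean, a standard deviation, and a scale and shift each produced by a linear layer) match the shape of a conditional layer normalization. The sketch below illustrates that reading for a single character vector. It assumes that both linear layers take the trigger word representation as input, that the statistics are computed over the dimensions of the character vector, and that a small `eps` is added for numerical stability; none of these details is confirmed by the extracted text.

```python
import math
from typing import List


def linear(weight: List[List[float]], bias: List[float], vec: List[float]) -> List[float]:
    """Plain affine map: weight @ vec + bias."""
    return [sum(w * x for w, x in zip(row, vec)) + b for row, b in zip(weight, bias)]


def fuse_trigger(
    token_vec: List[float],
    trigger_vec: List[float],
    w_gamma: List[List[float]],
    b_gamma: List[float],
    w_beta: List[List[float]],
    b_beta: List[float],
    eps: float = 1e-12,
) -> List[float]:
    """Normalize one filtered character vector, then scale and shift it conditioned on the trigger."""
    mean = sum(token_vec) / len(token_vec)
    variance = sum((v - mean) ** 2 for v in token_vec) / len(token_vec)
    std = math.sqrt(variance + eps)  # eps avoids division by zero for constant vectors
    gamma = linear(w_gamma, b_gamma, trigger_vec)  # scale derived from the trigger (assumed)
    beta = linear(w_beta, b_beta, trigger_vec)     # shift derived from the trigger (assumed)
    return [g * (v - mean) / std + b for g, v, b in zip(gamma, token_vec, beta)]
```

Because the scale and shift depend on the trigger word, the same filtered context is re-expressed differently for each trigger word before argument extraction.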

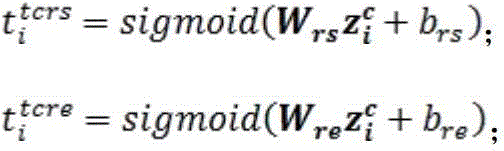

S32, compute, for each character of the input text, the probability that it is the start or end character of an argument of each role under event category $c$:

$$p_i^{s,r} = \mathrm{sigmoid}(W_r^{s}\, \tilde{h}_i^{c,t} + b_r^{s}), \qquad p_i^{e,r} = \mathrm{sigmoid}(W_r^{e}\, \tilde{h}_i^{c,t} + b_r^{e})$$

where $p_i^{s,r}$ denotes the probability that the $i$-th character of the input text is the start character of an argument of role $r$ for the trigger word $t$ of event category $c$, $p_i^{e,r}$ denotes the probability that the $i$-th character is the end character of such an argument, $W_r^{s}$ and $W_r^{e}$ denote weight parameters, and $b_r^{s}$ and $b_r^{e}$ denote bias parameters;

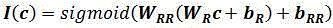

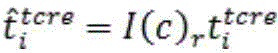

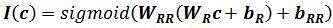

S33, define a learnable role interaction matrix and design the following decision function:

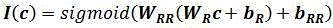

where $I(r, c)$ denotes the indicator function under event category $c$, $W_1$ and $b_1$ denote the weight parameter and bias parameter of the first linear layer, and $b_2$ denotes the bias parameter of the second linear layer;

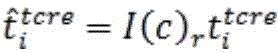

$I(r, c)$ is used as a weight to correct the results of step S32:

$$\hat{p}_i^{s,r} = I(r, c)\, p_i^{s,r}$$

$$\hat{p}_i^{e,r} = I(r, c)\, p_i^{e,r}$$

After training, the decision function learns not only the relationships between events and roles, but also the relationships among roles;

where $\hat{p}_i^{s,r}$ is the final probability that the $i$-th character of the input text is the start character of an argument of role $r$ for the trigger word $t$ of event category $c$, $\hat{p}_i^{e,r}$ is the final probability that the $i$-th character is the end character of such an argument, and $I(r, c)$ is the weight of role $r$ under event category $c$;
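The correction in S33 can be pictured as scaling each role's probabilities by that role's weight under the current event category. The sketch assumes the correction is a plain multiplication, which the extracted text suggests but does not state outright; the role names and the function name are hypothetical.

```python
from typing import Dict, List


def apply_role_weights(
    raw_probs: Dict[str, List[float]],
    role_weights: Dict[str, float],
) -> Dict[str, List[float]]:
    """Scale every per-character probability of a role by that role's weight (assumed product)."""
    return {
        role: [role_weights[role] * p for p in probs]
        for role, probs in raw_probs.items()
    }
```

A role whose weight is close to 0 under the current event category has all of its start and end probabilities pushed below the thresholds of S34, so it produces no arguments, while a role with a weight close to 1 is left essentially unchanged.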

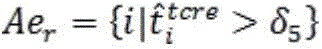

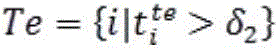

S34, filter the results of S33 with the preset thresholds $\epsilon_s$ and $\epsilon_e$ to obtain the position sets $S_r$ and $E_r$:

$$S_r = \{\, i \mid \hat{p}_i^{s,r} > \epsilon_s \,\}$$

$$E_r = \{\, i \mid \hat{p}_i^{e,r} > \epsilon_e \,\}$$

where $S_r$ denotes the set of start character positions of the arguments of role $r$, and $E_r$ denotes the set of end character positions of the arguments of role $r$;

S35, using the result of step S34, obtain the argument set $A_r$ of role $r$ by the nearest-match principle, where $s_a$ is the position in the text $X$ of the start character of an argument $a$, and $e_a$ is the element of the set $E_r$ closest to $s_a$.

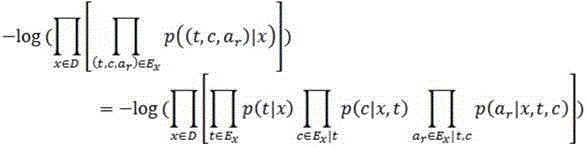

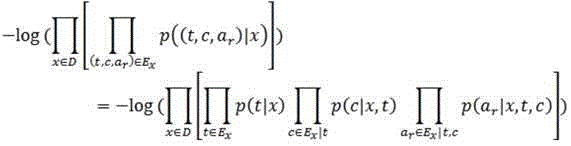

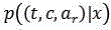

In a preferred technical solution, the loss function of the event extraction method is defined in terms of the following quantities:

$c_t$ denotes the event type of the trigger word $t$ in the input text $x$; $a_r$ denotes the argument of role $r$ for the trigger word $t$ of event type $c$ in the input text $x$; $D$ denotes all input samples; $p(t, c, a_r \mid x)$ denotes the probability that, in sample $x$, the trigger word is $t$, the event type is $c$ and the argument of role $r$ is $a_r$; $p(t \mid x)$ denotes the probability that the trigger word in sample $x$ is $t$; $p(c \mid x, t)$ denotes the probability that the event type of the trigger word $t$ in sample $x$ is $c$; $p(a_r \mid x, c, t)$ denotes the probability that, in sample $x$ with event category $c$ and trigger word $t$, the argument of role $r$ is $a_r$; and $E_x$ denotes all events in sample $x$.
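Read together, these definitions suggest a negative log-likelihood over a joint probability that factorizes along the cascade of trigger word, event type and argument. The following is a reconstruction under that assumption, not the formula from the original publication; in particular, the summation over the events $E_x$ of each sample is an assumed arrangement.

```latex
\begin{aligned}
p(t, c, a_r \mid x) &= p(t \mid x)\; p(c \mid x, t)\; p(a_r \mid x, c, t) \\
\mathcal{L} &= -\sum_{x \in D} \; \sum_{(t,\, c,\, a_r) \in E_x} \log p(t, c, a_r \mid x) \\
            &= -\sum_{x \in D} \; \sum_{(t,\, c,\, a_r) \in E_x}
               \Big[ \log p(t \mid x) + \log p(c \mid x, t) + \log p(a_r \mid x, c, t) \Big]
\end{aligned}
```

Under this reading, the three logarithmic terms correspond to trigger word extraction, event classification and argument extraction, so the three stages would be trained jointly with a single objective.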

In a preferred technical solution, the language model in step S1 is a BERT model.

The event extraction system comprises the following modules:

Text vectorization module: used to input the sample $X = (x_1, \dots, x_n)$ into a language-model-based text vectorization layer to obtain the text vectorization result $H = (h_1, \dots, h_n)$, where $x_i$ denotes the $i$-th character of the text, $h_i \in \mathbb{R}^d$ denotes the vectorization result corresponding to $x_i$, $H$ denotes the vectorization result of the text $X$, $\mathbb{R}$ denotes the real numbers, $d$ denotes the vector dimension, and $\mathbb{R}^d$ denotes the $d$-dimensional real vectors;

Event detection module: used to take the text vectorization result $H$ as input and, by means of the type-aware gated attention mechanism, complete the two subtasks of trigger word detection and event classification;

Argument extraction module: used to complete, for each trigger word of each event type in the output of the event detection module, the two subtasks of argument extraction and argument role classification by means of learnable role interaction parameters.

Compared with the prior art, the present invention has the following beneficial effects:

The present invention uses gating information as guidance so that different information flows to the trigger words under different event categories. It effectively filters out noise unrelated to the trigger words and integrates other relevant information, which better resolves trigger word ambiguity in event extraction scenarios with overlapping trigger words and improves event classification. The method also takes into account the commonly neglected co-occurrence relationship between roles and models it with learnable role interaction parameters, which further improves argument extraction and role classification.

Brief Description of the Drawings

FIG. 1 is a schematic diagram of the steps of the event extraction method based on a type-aware gated attention mechanism according to the present invention;

FIG. 2 is a schematic diagram of the model structure of the event detection module integrating the type-aware gated attention mechanism in a specific embodiment of the present invention.

Detailed Description

The present invention is described in further detail below with reference to the embodiments and the drawings, but the embodiments of the present invention are not limited thereto.

The present invention proposes an event extraction method based on a type-aware gated attention mechanism. Unlike a pure attention mechanism, the type-aware gated attention mechanism uses gating information as guidance so that different information flows to the trigger words under different event categories. It effectively filters out noise unrelated to the trigger words and integrates other relevant information, which better resolves trigger word ambiguity in event extraction scenarios with overlapping trigger words and improves event classification. The method also takes into account the commonly neglected co-occurrence relationship between roles and models it with learnable role interaction parameters, which further improves argument extraction and role classification.

Example 1

As shown in FIG. 1, an event extraction method based on a type-aware gated attention mechanism comprises the following steps:

S1, the sample $X = (x_1, \dots, x_n)$ is input into a text vectorization layer based on a language model such as BERT to obtain the text vectorization result $H = (h_1, \dots, h_n)$, where $x_i$ denotes the $i$-th character of the text and $h_i$ is its corresponding vectorization result;

S2, the text vectorization result $H$ is input into the event detection module, which integrates the type-aware gated attention mechanism, to complete the two subtasks of trigger word detection and event classification;

S3, for each trigger word of each event type in the result of S2, the argument extraction module, which integrates learnable role interaction parameters, completes the two subtasks of argument extraction and argument role classification.

Example 2

On the basis of Example 1, and as shown in FIG. 2, the event detection module integrating the type-aware gated attention mechanism in step S2 comprises the following sub-modules connected in series: a trigger word extraction layer and a gated attention event classification layer.

The trigger word extraction layer is constructed as follows:

S21, first compute, for each character of the input text, the probability that it is the start or end character of a trigger word:

$$p_i^{s} = \mathrm{sigmoid}(W_s h_i + b_s), \qquad p_i^{e} = \mathrm{sigmoid}(W_e h_i + b_e)$$

where $W_s$, $b_s$, $W_e$ and $b_e$ are learnable network parameters and sigmoid is the activation function. $p_i^{s}$ is the probability that the $i$-th character of the input text is the start character of a trigger word, and $p_i^{e}$ is the probability that the $i$-th character is the end character of a trigger word;

S22, filter the results of S21 with the preset thresholds $\delta_s$ and $\delta_e$ to obtain the position sets $S = \{\, i \mid p_i^{s} > \delta_s \,\}$ and $E = \{\, i \mid p_i^{e} > \delta_e \,\}$;

here, the position sets $S$ and $E$ are the set of start character positions and the set of end character positions of trigger words, respectively;

S23, using the result of step S22, obtain the trigger word set $T$ by the nearest-match principle, where $s$ is the position in the text $X$ of the start character of a candidate trigger word $t$ and $e$ is the element of the set $E$ closest to $s$.

The gated attention event classification layer is constructed as follows:

S24, in the gated information filtering layer, for each event category $c$, define an event category semantic vector $u_c$ and compute from it the corresponding gate vector $g_c$ with the gating unit;

here, $g_c$ is the gate vector under event category $c$, $W_g$ is the learnable weight parameter of the gating unit, and $b_g$ is the learnable bias parameter of the gating unit;

S25, using the result of S24, filter the context information under each event category with the element-wise product:

$$h_i^{c} = g_c \odot h_i$$

here, $h_i$ is the vector of the $i$-th character of the input text, and $h_i^{c}$ is the vector of the $i$-th character after information filtering under event category $c$ by the gated information filtering layer;

S26, in the attention information fusion layer, after the operation of step S25, use the attention function to obtain the importance score $\alpha_i^{c,t}$ of the $i$-th character of the input text for the trigger word $t$ under event category $c$;

here, $h_t$ is the representation vector of the trigger word $t$, and the attention function relies on a scoring function over the representation vectors;

S27, using the importance scores computed in S26, obtain, under each event category, the final information aggregation result for each trigger word;

here, $z_t^{c}$ is the information aggregation vector related to the trigger word $t$ under event category $c$, output by the attention information fusion layer;

S28, in the event classification layer, using the information aggregation vector related to the trigger word $t$ obtained in step S27, determine which event types $t$ belongs to;

here, $w$ is the learnable weight parameter of the event category decision unit, $b$ is the bias parameter of the event category decision unit, and $w^{T}$ denotes the transpose of $w$. Specifically, given a preset threshold $\delta$, every event category $c$ whose predicted probability for the trigger word $t$ exceeds $\delta$ is assigned to $t$ as one of its event categories;

that is, the final event category set of each trigger word $t$ is $C_t$.

Example 3

On the basis of Examples 1 and 2, the argument extraction module of step S3, which integrates learnable role interaction parameters, is constructed as follows:

S31, to better extract all arguments related to a given trigger word $t$ of event type $c$, first integrate the trigger word representation vector $h_t$ into the context:

$$\tilde{h}_i^{c,t} = \gamma_t \odot \frac{h_i^{c} - \mu}{\sigma} + \beta_t$$

here, $\mu$ and $\sigma$ are the mean and the standard deviation computed from the vector $h_i^{c}$ of the input text after information filtering under event category $c$, and $\gamma_t$ and $\beta_t$ are the scale and shift parameters;

S32, compute, for each character of the input text, the probability that it is the start or end character of an argument of each role under event category $c$:

$$p_i^{s,r} = \mathrm{sigmoid}(W_r^{s}\, \tilde{h}_i^{c,t} + b_r^{s}), \qquad p_i^{e,r} = \mathrm{sigmoid}(W_r^{e}\, \tilde{h}_i^{c,t} + b_r^{e})$$

here, $p_i^{s,r}$ and $p_i^{e,r}$ denote the probabilities that the $i$-th character of the input text is, respectively, the start character and the end character of an argument of role $r$ for the trigger word $t$ of event category $c$;

S33, define a learnable role interaction matrix and design a decision function $I(r, c)$;

its output is used as a weight to correct the results of step S32:

$$\hat{p}_i^{s,r} = I(r, c)\, p_i^{s,r}$$

$$\hat{p}_i^{e,r} = I(r, c)\, p_i^{e,r}$$

After training, the decision function learns not only the relationships between events and roles, but also the relationships among roles. $\hat{p}_i^{s,r}$ and $\hat{p}_i^{e,r}$ are the final probabilities that the $i$-th character of the input text is, respectively, the start character and the end character of an argument of role $r$ for the trigger word $t$ of event category $c$. Here, $I(r, c)$ is the weight of role $r$ under event category $c$.

S34, filter the results of S33 with the preset thresholds $\epsilon_s$ and $\epsilon_e$ to obtain the position sets $S_r$ and $E_r$:

$$S_r = \{\, i \mid \hat{p}_i^{s,r} > \epsilon_s \,\}$$

$$E_r = \{\, i \mid \hat{p}_i^{e,r} > \epsilon_e \,\}$$

here, the position sets $S_r$ and $E_r$ are the set of start character positions and the set of end character positions of the arguments of role $r$, respectively;

S35, using the result of step S34, obtain the argument set $A_r$ of role $r$ by the nearest-match principle, where $s_a$ is the position in the text $X$ of the start character of an argument $a$ and $e_a$ is the element of the set $E_r$ closest to $s_a$.

Example 4

On the basis of Examples 1, 2 and 3, the loss function of the event extraction method is defined in terms of the following quantities:

here, $c_t$ denotes the event type of the trigger word $t$ in the input text $x$, and $a_r$ denotes the argument of role $r$ for the trigger word $t$ of event type $c$ in the input text $x$.

As described above, the present invention can be well implemented.

All features disclosed in the embodiments of this specification, and all steps of any method or process implicitly disclosed, may be combined, extended or replaced in any manner, except for mutually exclusive features and/or steps.

The above are only preferred embodiments of the present invention and do not limit the present invention in any form. Any simple modification, equivalent replacement or improvement made to the above embodiments in accordance with the technical essence of the present invention, and within its spirit and principles, still falls within the protection scope of the technical solution of the present invention.

Claims (6)

Priority Applications (1)

| Application Number | Priority Date | Filing Date | Title |
|---|---|---|---|
| CN202211576463.3A CN115577112B (en) | 2022-12-09 | 2022-12-09 | Event extraction method and system based on type perception gated attention mechanism |


Publications (2)

| Publication Number | Publication Date |
|---|---|
| CN115577112A (en) | 2023-01-06 |
| CN115577112B (en) | 2023-04-18 |

Family

ID=84589980

Family Applications (1)

| Application Number | Priority Date | Filing Date | Title |
|---|---|---|---|
| CN202211576463.3A (Active), CN115577112B (en) | 2022-12-09 | 2022-12-09 | Event extraction method and system based on type perception gated attention mechanism |

Country Status (1)

| Country | Link |
|---|---|
| CN (1) | CN115577112B (en) |

Citations (3)

| Publication number | Priority date | Publication date | Assignee | Title |
|---|---|---|---|---|
| CN112307761A (en) * | 2020-11-19 | 2021-02-02 | 新华智云科技有限公司 | Event extraction method and system based on attention mechanism |
| CN113705218A (en) * | 2021-09-03 | 2021-11-26 | 四川大学 | Event element gridding extraction method based on character embedding, storage medium and electronic device |
| CN115238685A (en) * | 2022-09-23 | 2022-10-25 | 华南理工大学 | Combined extraction method for building engineering change events based on position perception |

Family Cites Families (9)

| Publication number | Priority date | Publication date | Assignee | Title |
|---|---|---|---|---|
| US10565229B2 (en) * | 2018-05-24 | 2020-02-18 | People.ai, Inc. | Systems and methods for matching electronic activities directly to record objects of systems of record |
| US10186275B2 (en) * | 2017-03-31 | 2019-01-22 | Hong Fu Jin Precision Industry (Shenzhen) Co., Ltd. | Sharing method and device for video and audio data presented in interacting fashion |
| CN109299470B (en) * | 2018-11-01 | 2024-02-09 | 成都数联铭品科技有限公司 | Method and system for extracting trigger words in text bulletin |
| CN113239142B (en) * | 2021-04-26 | 2022-09-23 | 昆明理工大学 | Trigger-word-free event detection method fused with syntactic information |
| CN115292568B (en) * | 2022-03-02 | 2023-11-17 | 内蒙古工业大学 | Civil news event extraction method based on joint model |
| CN114298053B (en) * | 2022-03-10 | 2022-05-24 | 中国科学院自动化研究所 | A joint event extraction system based on the fusion of feature and attention mechanism |
| CN114648016B (en) * | 2022-03-29 | 2025-02-11 | 河海大学 | An event argument extraction method based on event element interaction and label semantic enhancement |
| CN115392248A (en) * | 2022-06-22 | 2022-11-25 | 北京航空航天大学 | A Method for Event Extraction Based on Context and Graph Attention |
| CN115168541B (en) * | 2022-07-13 | 2025-08-26 | 山西大学 | Text event extraction method and system based on frame semantic mapping and type awareness |

- 2022-12-09: CN application CN202211576463.3A (patent CN115577112B), status Active


Also Published As

| Publication number | Publication date |
|---|---|
| CN115577112A (en) | 2023-01-06 |


Legal Events

| Date | Code | Title | Description |
|---|---|---|---|
| | PB01 | Publication | |
| | SE01 | Entry into force of request for substantive examination | |
| | GR01 | Patent grant | |