CN108564109B - Remote sensing image target detection method based on deep learning - Google Patents

- Publication number

- CN108564109B

- Authority

- CN

- China

- Prior art keywords

- network

- model

- remote sensing

- image

- target detection

- Prior art date

- Legal status (The legal status is an assumption and is not a legal conclusion. Google has not performed a legal analysis and makes no representation as to the accuracy of the status listed.)

- Active

Classifications

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06F—ELECTRIC DIGITAL DATA PROCESSING

- G06F18/00—Pattern recognition

- G06F18/20—Analysing

- G06F18/24—Classification techniques

- G06F18/241—Classification techniques relating to the classification model, e.g. parametric or non-parametric approaches

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06F—ELECTRIC DIGITAL DATA PROCESSING

- G06F18/00—Pattern recognition

- G06F18/20—Analysing

- G06F18/21—Design or setup of recognition systems or techniques; Extraction of features in feature space; Blind source separation

- G06F18/214—Generating training patterns; Bootstrap methods, e.g. bagging or boosting

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06N—COMPUTING ARRANGEMENTS BASED ON SPECIFIC COMPUTATIONAL MODELS

- G06N3/00—Computing arrangements based on biological models

- G06N3/02—Neural networks

- G06N3/04—Architecture, e.g. interconnection topology

- G06N3/045—Combinations of networks

Landscapes

- Engineering & Computer Science (AREA)

- Theoretical Computer Science (AREA)

- Data Mining & Analysis (AREA)

- Physics & Mathematics (AREA)

- Life Sciences & Earth Sciences (AREA)

- Artificial Intelligence (AREA)

- General Physics & Mathematics (AREA)

- General Engineering & Computer Science (AREA)

- Evolutionary Computation (AREA)

- Computer Vision & Pattern Recognition (AREA)

- Evolutionary Biology (AREA)

- Bioinformatics & Computational Biology (AREA)

- Bioinformatics & Cheminformatics (AREA)

- Computational Linguistics (AREA)

- Biomedical Technology (AREA)

- Biophysics (AREA)

- Health & Medical Sciences (AREA)

- General Health & Medical Sciences (AREA)

- Molecular Biology (AREA)

- Computing Systems (AREA)

- Mathematical Physics (AREA)

- Software Systems (AREA)

- Image Analysis (AREA)

- Image Processing (AREA)

Abstract

The invention relates to a remote sensing image target detection method based on deep learning, comprising: constructing a data set from remote sensing images, in which the images are classified and labeled to give an image data set and the class labels generated by the labeling work; building a panchromatic sharpening model based on a generative adversarial network; building a target detection model based on a deep convolutional neural network and training the model end to end by backpropagation, stochastic gradient descent and similar methods; and testing the constructed model end to end. The invention has the advantage of high accuracy.

Description

Technical Field

The invention relates to the fields of remote sensing image processing, deep learning and pattern recognition, and in particular to a deep-learning-based method that uses a generative adversarial network to perform panchromatic sharpening and target detection on spectral images.

Background

Owing to limits on signal transmission bandwidth and imaging sensor storage, most remote sensing satellites provide only multispectral (MSI) images with high spectral resolution and panchromatic (PAN) images with high spatial resolution. Fusing the two combines their complementary strengths into a single remote sensing image with clear spatial detail and rich spectral information; this fusion technique is also called panchromatic sharpening.

At present, the mainstream panchromatic sharpening methods in remote sensing include component substitution and multi-scale analysis. Component substitution transforms the multispectral image into another color space by principal component analysis, Gram-Schmidt orthogonalization, intensity-hue-saturation (IHS) transformation or similar methods, replaces the spatial information channel of the multispectral image with the panchromatic image, and obtains the fused image through the inverse transformation.

Multi-scale analysis is based on the wavelet transform, the Laplacian pyramid, multi-scale geometric analysis and similar approaches. A multi-resolution analysis tool decomposes the source images into a sequence of decomposition coefficients, the coefficients are combined into those of the fused image according to a fusion rule, and the fused image is finally obtained through the inverse transform of the multi-resolution analysis tool.

In recent years, with the availability of large-scale data and the development of deep neural networks, deep learning has become an important research direction in machine learning. Building on deep learning and ideas from game theory, the Generative Adversarial Network (GAN), which can generate high-dimensional samples from low-dimensional features, can be applied to panchromatic sharpening. A GAN consists of a generative network model and a discriminative network model. The generator produces sample data, the discriminator judges whether a sample is real or generated, and the two are trained simultaneously; through repeated iteration the generator is continually strengthened and its samples come closer and closer to real samples.

As an important application of pattern recognition in remote sensing, the detection and identification of multi-scale targets in remote sensing images is a key technology in geographic survey, military reconnaissance, precision strike and related fields. Improving the precision of target detection has long been a research focus and a difficulty in remote sensing applications, and it has important military and civil value. With the rapid development of high-resolution remote sensing, large-scale high-resolution remote sensing image data sets have been built, which makes more intelligent remote sensing target detection systems possible, and extracting effective target features from massive data has become a key technology for remote sensing image applications.

Traditional detection algorithms almost always perform classification and detection on the basis of given features. The extracted features and the detection model are the two factors that determine detection performance. This places strict requirements on the input features and requires finding a detection model that matches them.

However, meeting these requirements is complex and time-consuming and depends heavily on expert knowledge and on the characteristics of the data. Moreover, it is difficult to learn an effective classification model from large-scale data that fully mines the correlations within the data.

With the development of deep learning, an end-to-end learning process that takes raw data as input has become possible. Deep artificial neural networks have strong feature learning ability: the features learned by a deep model represent the original data more fundamentally, and a deep-learning-based target detection model trained on large-scale data can extract richer internal information, which benefits visualization and classification. Therefore, a method that learns features automatically can be designed on the basis of convolutional neural networks, so that the most effective deep features in a large amount of data are obtained through learning and the associations within the data are fully mined by a relatively complex network structure.

Disclosure of Invention

The invention aims to provide a remote sensing image target detection method based on deep learning. The generative adversarial network model used for panchromatic sharpening expands the information content of the remote sensing image, and the deep convolutional neural network model used for target detection offers higher accuracy, better real-time performance and stronger robustness. To achieve this purpose, the invention adopts the following technical scheme:

A remote sensing image target detection method based on deep learning, comprising the following steps:

1) constructing a data set from remote sensing images: after the remote sensing images are classified and labeled, the image data set and the class labels generated by the labeling work are divided into a training set and a test set for subsequent network training and testing;

2) constructing a panchromatic sharpening model based on a generative adversarial network: the generator network G of the GAN learns the mapping from a random noise vector z and an image x in the data set of step 1) to the generated sample image y, i.e. G: {x, z} → y; the generator adopts a U-Net structure with skip connections added; the U-Net structure is divided into encoding layers and decoding layers; in each encoding layer the length and width of the feature map are halved and the number of feature layers is halved; in each decoding layer the length and width of the feature map are doubled and the number of feature layers is doubled, that is, the feature map is concatenated channel-wise with that of the corresponding encoding layer, and deconvolution is then applied; a discriminator network model is designed on the basis of a convolutional neural network (CNN) for classification, the network having one concatenation layer and four convolutional layers;

3) constructing a target detection model based on a deep convolutional neural network: the four steps of a target detection algorithm, namely candidate region generation, feature extraction, classification and position refinement, are unified in one deep network framework and computed in parallel on a GPU; a residual network (ResNet), comprising a number of convolutional layers and rectified linear units (ReLU), is used as the base classification network for feature extraction; a region proposal network structure is designed to evaluate all possible candidate boxes on the extracted feature map, and sharing the convolutions reduces the marginal cost of computing proposal boxes; the model is trained end to end by backpropagation and stochastic gradient descent;

4) performing an end-to-end test on the constructed model: the target detection model is trained and tested on the data set constructed in step 1).

Compared with the prior art, the invention has the advantages that:

First, the invention provides a cascaded deep-learning method that performs panchromatic sharpening and then target detection. During panchromatic sharpening, the GAN uses a deep convolutional neural network to extract the high-dimensional deep features implicit in large-scale data, and its structure also minimizes the information lost during convolution. The sharpened remote sensing image has clear spatial detail and rich spectral information; compared with the panchromatic and spectral images it combines high spatial resolution with high spectral resolution, which enriches the usable information in the data set, and the improved spatial resolution is of practical value for detecting small targets. Images are detected directly, end to end, by the trained model, which is more efficient and lowers both time cost and computational redundancy.

Second, unlike existing traditional target detection methods for remote sensing images, the invention proposes a target detection network model based on a deep convolutional neural network. Compared with hand-crafted features such as HOG, the feature map produced by the convolutional neural network represents the features in the data directly. Because the convolution operation is translation invariant, the feature map contains both the class information and the position information of an object, so the classification results and the position regression are more accurate and more general. The method adopts a region proposal network, so region proposal is also completed inside the network; the whole process from feature extraction to final detection runs in one network, which increases speed further and also addresses fitting-related problems.

Drawings

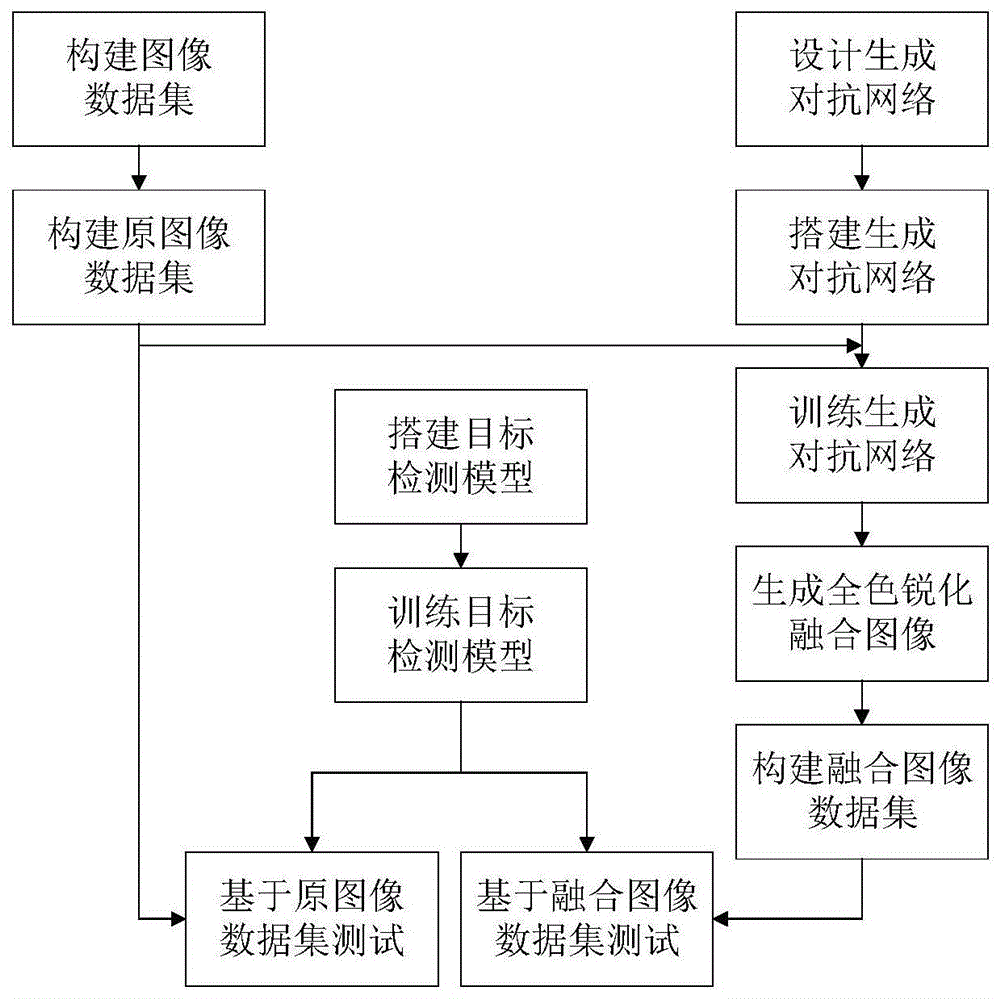

FIG. 1 is a flow chart of the experiment required by the present invention.

FIG. 2 is a schematic diagram of the generator network structure for panchromatic sharpening.

FIG. 3 shows the panchromatic sharpening effect on a remote sensing image: (a) panchromatic remote sensing image data; (b) spectral remote sensing image data; (c) fused remote sensing image data; (d) remote sensing image data fused by the invention.

FIG. 4 is a diagram of the region proposal network structure.

Detailed Description

To make the technical solution of the present invention clearer, a specific embodiment is described below. As shown in FIG. 1, the invention is implemented by the following steps:

1. Constructing a large-scale remote sensing image data set

The invention uses publicly available remote sensing image collections, such as SpaceNet on AWS, NWPU VHR-10 and USGS, to construct the data set for the detection task.

The NWPU VHR-10 dataset is a publicly available geospatial object detection dataset with ten classes. The classes include airplanes, ships, oil storage tanks, ports and bridges, among others, and the dataset comprises high-resolution images together with label files giving the targets and labels annotated in each image.

SpaceNet is a large-scale remote sensing image data set hosted on Amazon's AWS cloud platform and produced jointly by DigitalGlobe, CosmiQ Works and NVIDIA. It comprises an online repository of satellite images and labeled training data, and is a publicly released, high-resolution satellite image data platform intended specifically for training machine learning algorithms. In addition, the invention combines remote sensing data from the Geospatial Data Cloud platform of the Chinese Academy of Sciences, the United States Geological Survey (USGS) and Google to build the data set required for training and testing.

The image data in the data set are divided into a training set and a test set at a ratio of 4:1. After the images are classified and labeled, the image data sets and labels are prepared in the format of the PASCAL VOC challenge for subsequent network training and testing.
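The 4:1 division described above can be sketched as follows. This is a minimal illustration, not the patent's procedure: the function name `split_dataset`, the shuffling step and the fixed seed are assumptions made for the example.

```python
import random


def split_dataset(items, train_parts=4, test_parts=1, seed=0):
    """Shuffle the items and split them train:test = train_parts:test_parts.

    The shuffle and the seed are illustrative assumptions; the source only
    states the 4:1 ratio.
    """
    items = list(items)
    random.Random(seed).shuffle(items)
    n_train = len(items) * train_parts // (train_parts + test_parts)
    return items[:n_train], items[n_train:]


# Example with 100 hypothetical image identifiers.
train, test = split_dataset(range(100))
print(len(train), len(test))
```

Shuffling before the split keeps each class from ending up only in one subset when the images are stored class by class.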

2. Building a panchromatic sharpening model based on a generative adversarial network

Based on the remote sensing database constructed in step 1, a generative adversarial network for panchromatic sharpening of remote sensing images is built and trained; the purpose of this step is to provide remote sensing image data with high spatial and spectral resolution for the subsequent detection. The generative adversarial network for panchromatic sharpening consists of two networks, a generator and a discriminator, each generally a multi-layer network containing convolutional layers and/or fully connected layers. After repeated experiments on network structures that perform well, a convolutional neural network based on U-Net is constructed as the generator.

The generator is built on the fully convolutional U-Net architecture, and discriminator architectures with different receptive fields are built. Convolution kernels in the U-Net perform the downsampling, which reduces redundant computation and extracts abstract features of the target to some extent. Convolving the image with several different kernels yields the responses to those kernels, which serve as the features of the image. Convolving the input image matrix with a convolution kernel produces a new image matrix, namely a feature map.

The units connected after this keep the scale of the feature map unchanged. In addition, the pooling layers in the network are replaced with convolutional layers that keep the feature map scale unchanged, the fully connected layers are removed, and deconvolution layers perform the upsampling of the image. Features output by both shallow and deep convolutional layers can thus be processed, which improves the accuracy of feature extraction.

The generator described above takes as input a random noise vector z and a panchromatic image x from the database, and the image y it generates is fed to the discriminator, that is, G: {x, z} → y. After training, the discriminator should be unable to identify the generated samples as fake, while the discriminator D is itself trained to classify generated samples as well as possible.

The training target of GAN can be expressed by the following loss function formula, where x is the input existing image, y is the output sample image, and z is the random noise vector:

The generator and the discriminator both adopt the structure convolutional layer, batch normalization, linear unit. The GAN handles the high-frequency structural detail in the image. During training the generator minimizes the objective and the discriminator maximizes it, that is:

$$G^{*} = \arg\min_{G}\max_{D}\,\mathcal{L}_{cGAN}(G, D)$$
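The loss formula itself is not reproduced in this text. Assuming that $\mathcal{L}_{cGAN}$ denotes the standard conditional GAN objective (an assumption, chosen because it is consistent with the symbols x, y and z and with the min-max statement above), it would read:

$$\mathcal{L}_{cGAN}(G, D) = \mathbb{E}_{x,y}\big[\log D(x, y)\big] + \mathbb{E}_{x,z}\big[\log\big(1 - D(x, G(x, z))\big)\big]$$

Under this reading the discriminator D raises the objective by scoring real pairs (x, y) high and generated pairs (x, G(x, z)) low, while the generator G lowers it by producing outputs that D scores as real.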

When the final sample image is generated, the input panchromatic image and the output fused image have the same underlying structure and share the locations of salient edges. To let the generator use this information, skip connections are added to it and the overall structure of U-Net is adopted.

U-Net is a fully convolutional structure that extends the traditional encoder-decoder architecture by adding skip connections between corresponding layers (layers whose feature maps have the same size) of the encoding module and the decoding module.

The U-Net network is divided into two parts, an encoding part (eight layers in total) and a decoding part (eight layers in total). In each encoding layer the length and width of the feature map are halved and the number of feature layers is increased by half; in each decoding layer the length and width of the feature map are doubled and the number of feature layers is doubled, that is, the feature map is concatenated channel-wise with that of the corresponding encoding layer, and deconvolution is then applied.

An image operation is applied around the border of the input image, and the network is designed with 20 convolutional layers, 4 downsampling steps and 4 upsampling steps. Specifically, for a network with n layers, the invention adds a skip connection between each layer i and layer n-i, connecting all channels of layer i with those of layer n-i.
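The halving of the feature map in each encoding layer and the pairing of layer i with layer n-i can be illustrated with a short sketch. The input size of 256 pixels and the total of n = 16 layers (eight encoding plus eight decoding) are assumptions chosen for the example; the function names are hypothetical.

```python
def encoder_sizes(input_size, num_layers):
    """Side length of the feature map after each encoding layer (halved each time)."""
    sizes = []
    size = input_size
    for _ in range(num_layers):
        size //= 2
        sizes.append(size)
    return sizes


def skip_pairs(n):
    """Pairs (i, n - i) joined by a skip connection in an n-layer network."""
    return [(i, n - i) for i in range(1, n) if i < n - i]


# Assumed example: 256x256 input, 8 encoding layers, 16 layers in total.
print(encoder_sizes(256, 8))
print(skip_pairs(16))
```

Each pair links an encoding layer to the decoding layer whose feature map has the same size, which is what allows the channel-wise concatenation described above.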

A discriminator network model is designed on the basis of a convolutional neural network (CNN) for classification. The discriminator is trained before the generator: the discriminator effectively acts as the loss function of the generator, so it is trained more fully than the generator in order to provide the right target for the generator to converge to. The network is designed with one concatenation layer and four convolutional layers. This CNN is designed with a reduced number of parameters and classifies only whether each patch of the generated fused image is real or fake; the network is run convolutionally across the image and all responses are averaged to give the final output of D.
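The idea of scoring each patch and averaging the responses can be sketched in plain Python. This is only an illustration of the averaging step: `score_patch` stands in for the four-layer convolutional classifier and is a hypothetical placeholder, as are the patch size and stride.

```python
def patch_discriminator(image, patch_size, stride, score_patch):
    """Slide a window over a 2D image, score every patch, return the mean score.

    `score_patch` is a hypothetical stand-in for the learned per-patch
    real/fake classifier described in the text.
    """
    height, width = len(image), len(image[0])
    responses = []
    for top in range(0, height - patch_size + 1, stride):
        for left in range(0, width - patch_size + 1, stride):
            patch = [row[left:left + patch_size]
                     for row in image[top:top + patch_size]]
            responses.append(score_patch(patch))
    return sum(responses) / len(responses)


def mean_intensity(patch):
    """Toy scorer used only for the example: average pixel value of the patch."""
    values = [v for row in patch for v in row]
    return sum(values) / len(values)


image = [[1.0, 1.0, 0.0, 0.0] for _ in range(4)]
print(patch_discriminator(image, patch_size=2, stride=2, score_patch=mean_intensity))
```

Because the classifier only ever sees one patch at a time, its parameter count does not grow with the image size.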

3. Constructing a target detection model based on a deep convolutional neural network

Based on deep learning, a neural network model with multiple hidden layers is constructed so that more useful features can be learned from large-scale training data, which ultimately improves the accuracy of classification or prediction. To achieve high precision and high adaptability in remote sensing target detection, the invention builds the detection network model from a base feature extraction network, a region proposal network and a classification network.

The detection network algorithm based on the deep network designed by the invention has the following implementation steps:

(1) inputting a panchromatic sharpened remote sensing image;

(2) inputting the whole picture into a convolutional neural network for feature extraction;

(3) generating proposal windows with a region proposal network (RPN), 300 proposal windows per picture;

(4) mapping the proposal windows onto the last convolutional feature map of the convolutional neural network;

(5) generating a feature map of fixed size for each region of interest through the pooling layer;

(6) jointly training the classification probability and the bounding-box regression, using the detection classification probability and the detection box regression.
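Step (5) above, which turns a region of interest of any size into a fixed-size feature map, can be sketched with a simple max pooling over a grid of bins. This is an illustrative plain-Python version for a single-channel feature map, not the patent's implementation; the function name and the bin boundary rule (floor for the start, ceiling for the end) are assumptions.

```python
import math


def roi_max_pool(feature, roi, out_h, out_w):
    """Max-pool the region roi = (x0, y0, x1, y1) of a 2D feature map to out_h x out_w.

    Columns x0..x1-1 and rows y0..y1-1 belong to the region. The region is
    divided into out_h x out_w bins and the maximum of each bin is kept.
    """
    x0, y0, x1, y1 = roi
    h, w = y1 - y0, x1 - x0
    pooled = []
    for i in range(out_h):
        r_start = y0 + math.floor(i * h / out_h)
        r_end = y0 + math.ceil((i + 1) * h / out_h)
        row = []
        for j in range(out_w):
            c_start = x0 + math.floor(j * w / out_w)
            c_end = x0 + math.ceil((j + 1) * w / out_w)
            row.append(max(feature[r][c]
                           for r in range(r_start, r_end)
                           for c in range(c_start, c_end)))
        pooled.append(row)
    return pooled


# 4x4 toy feature map with values 0..15, pooled to 2x2.
feature = [[r * 4 + c for c in range(4)] for r in range(4)]
print(roi_max_pool(feature, (0, 0, 4, 4), 2, 2))
```

Whatever the size of the proposal window, the output always has the same shape, so it can be fed to the classification and regression branches.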

The feature extraction network adopts a residual network structure (ResNet); the residual network allows the network to be made deeper and markedly improves classification performance. Compared with traditional convolutional neural networks such as VGG, the residual network has lower complexity and needs fewer parameters, can be made deeper, and does not suffer from vanishing gradients. A deep convolutional residual network learns the mapping from the input to (output - input), that is, the residual, exploiting the prior that the output consists partly of the input.
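The residual idea, learning (output - input) and adding the input back, can be shown in a few lines. This is a toy sketch on plain lists: `residual_fn` is a hypothetical stand-in for the stacked convolutional layers, and applying ReLU after the addition follows the common ResNet layout, which is an assumption here.

```python
def relu(values):
    """Rectified linear unit applied element-wise."""
    return [max(0.0, v) for v in values]


def residual_block(x, residual_fn):
    """Compute relu(F(x) + x), where F = residual_fn learns only the residual."""
    fx = residual_fn(x)
    return relu([f + xi for f, xi in zip(fx, x)])


# If the learned residual is zero, the block passes a non-negative input through unchanged.
print(residual_block([1.0, 2.0], lambda v: [0.0] * len(v)))
```

Because the identity path is always present, a block that has nothing useful to learn can output a zero residual, which is why stacking more blocks does not make the network harder to train.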

First, an 18-layer and a 34-layer residual network are constructed by inserting shortcut connections into the plain network, which can greatly reduce the amount of computation. Rather than simply stacking layers as in conventional methods, a shortcut connection is introduced between the input and the output, which addresses the vanishing-gradient problem of deep networks.

A Region Proposal Network (RPN) evaluates all possible, relatively sparse candidate boxes on the feature map extracted by the ResNet. Using the mapping mechanism of SPP-Net, the region proposal network maps points of the convolutional layer back to the original image in one-to-one correspondence. The network is trained with several fixed initial scales, and each candidate is given a positive or negative label according to its degree of overlap with the ground truth, so that the network learns whether a target object is present.
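The positive and negative labeling by overlap with the ground truth can be sketched with an intersection-over-union (IoU) computation. The source does not give the thresholds; the values 0.7 and 0.3 below are assumptions borrowed from common RPN practice, and the function names are hypothetical.

```python
def iou(a, b):
    """Intersection over union of two boxes given as (x0, y0, x1, y1)."""
    ix0, iy0 = max(a[0], b[0]), max(a[1], b[1])
    ix1, iy1 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix1 - ix0) * max(0.0, iy1 - iy0)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def label_anchor(anchor, gt_boxes, pos_thresh=0.7, neg_thresh=0.3):
    """Return 1 (target), 0 (background) or -1 (ignored during training).

    The thresholds are illustrative assumptions, not values from the source.
    """
    best = max((iou(anchor, gt) for gt in gt_boxes), default=0.0)
    if best >= pos_thresh:
        return 1
    if best < neg_thresh:
        return 0
    return -1


print(iou((0, 0, 2, 2), (1, 1, 3, 3)))
print(label_anchor((0, 0, 2, 2), [(0, 0, 2, 2)]))
```

Candidates whose overlap falls between the two thresholds are left out of the loss in this sketch, so that ambiguous boxes do not push the classifier in either direction.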

To reduce the computational cost of the region proposal network, the method shares the convolution results within the deep network and uses fixed scale variations and a fixed sampling scheme to obtain the target candidate regions, that is, the candidate windows on the feature map. Nine candidate windows are first generated from the combinations of scale and aspect ratio. A fixed-size window then slides over the final convolutional feature map; each window position outputs a feature of fixed dimension, and the coordinates of the 9 candidates are regressed and classified at each position.
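The nine candidate windows per position can be generated from three scales and three aspect ratios. The particular scales (128, 256, 512 pixels) and ratios (0.5, 1, 2) below are assumptions taken from common RPN configurations; the source only states that nine windows result from scale and aspect ratio.

```python
import math


def make_anchors(cx, cy, scales=(128, 256, 512), ratios=(0.5, 1.0, 2.0)):
    """Return 9 boxes (x0, y0, x1, y1) centered at (cx, cy).

    Each box has area scale**2 and height/width equal to the ratio.
    Scales and ratios are illustrative assumptions.
    """
    anchors = []
    for scale in scales:
        for ratio in ratios:
            width = scale / math.sqrt(ratio)
            height = scale * math.sqrt(ratio)
            anchors.append((cx - width / 2, cy - height / 2,
                            cx + width / 2, cy + height / 2))
    return anchors


for box in make_anchors(0.0, 0.0):
    print([round(v, 1) for v in box])
```

The same nine shapes are reused at every sliding-window position, so only the center changes from one position to the next.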

The objective function of the region proposal network is the sum of the classification loss and the regression loss. Classification uses cross entropy and regression uses the robust Smooth L1 loss; the formula can be expressed as follows:

The overall loss function is specifically:

The loss function has two parts, corresponding to the two branches of the region proposal network: the classification error of target versus non-target and the regression error of the detection box. The regression term uses the smooth L1 function, for which the learning rate is easier to tune than for an L2-type error. For the detection box term, only candidate windows judged to contain a target are considered, and the annotated coordinates serve as the regression target. In addition, the detection box error is not computed by comparing the coordinates of the four corners but on the four-dimensional parameterization $t_x, t_y, t_w, t_h$, calculated as follows:

$$t_x = (x - x_a)/w_a, \quad t_y = (y - y_a)/h_a,$$

$$t_w = \log(w/w_a), \quad t_h = \log(h/h_a),$$

$$t_x^{*} = (x^{*} - x_a)/w_a, \quad t_y^{*} = (y^{*} - y_a)/h_a,$$

$$t_w^{*} = \log(w^{*}/w_a), \quad t_h^{*} = \log(h^{*}/h_a),$$
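The parameterization above and the smooth L1 regression term can be sketched as follows. Two points are assumptions rather than statements from the source: that (x, y, w, h) denote box center and size, with subscript a for the candidate window and an asterisk for the annotated box, and that smooth L1 takes its usual form (0.5 d² for |d| < 1, |d| - 0.5 otherwise).

```python
import math


def encode_box(box, anchor):
    """Return (t_x, t_y, t_w, t_h) of a box relative to an anchor.

    Boxes are (center_x, center_y, width, height); this layout is an assumption.
    """
    x, y, w, h = box
    xa, ya, wa, ha = anchor
    return ((x - xa) / wa, (y - ya) / ha, math.log(w / wa), math.log(h / ha))


def smooth_l1(d):
    """Usual smooth L1: quadratic near zero, linear for large errors."""
    d = abs(d)
    return 0.5 * d * d if d < 1.0 else d - 0.5


def box_regression_loss(pred_box, gt_box, anchor):
    """Sum of smooth L1 over the four differences t - t*."""
    t = encode_box(pred_box, anchor)
    t_star = encode_box(gt_box, anchor)
    return sum(smooth_l1(a - b) for a, b in zip(t, t_star))


anchor = (10.0, 10.0, 4.0, 4.0)
print(encode_box((12.0, 10.0, 4.0, 4.0), anchor))
print(box_regression_loss((12.0, 10.0, 4.0, 4.0), (10.0, 10.0, 4.0, 4.0), anchor))
```

Dividing the offsets by the anchor size and taking the logarithm of the size ratios keeps the regression targets on a similar scale for small and large targets.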

During testing, the region of interest (ROI) pooling layer obtains the list of candidate ROIs from the region proposal network, takes the corresponding features from the convolutional layers, and passes them on for classification and regression. Because the region proposal network and the detection network share the convolutional layers used to generate proposal windows, computation is shared between proposal generation and detection.

The network is trained with a four-step procedure. First, the region proposal network is trained on its own, with its parameters initialized from a pre-trained model. Second, the detection network is trained on its own, taking the candidate regions output by the region proposal network in the first step as input: the region proposal network outputs candidate boxes, the original image is cropped by the candidate boxes, and after several convolution-pooling stages and ROI pooling two branches are output, namely the classification probability of the target (Softmax Loss) and the bounding-box regression (Smooth L1 Loss). Third, the region proposal network is trained again with the parameters of the shared part of the network fixed, updating only the parameters unique to the region proposal network. Finally, the detection network is fine-tuned again using the results of the region proposal network, with the shared parameters fixed and only the parameters unique to the detection network updated.

4. Cascading the networks for end-to-end testing

The panchromatic remote sensing images in the test set of the database are input and sharpened by the trained generative adversarial network. The results are then fed into the constructed detection network model and the detection results are evaluated. On the test data set constructed in step 1, data sets in PASCAL VOC format are built from the spectral images before and after fusion; the panchromatically sharpened remote sensing images give better detection results. Detection experiments are also carried out with a traditional image-processing classification method and with the deep detection network constructed here; for the military and civil targets in the images (airplanes, ships, oil storage tanks, bridges and ports), the detection performance is clearly better than that of the traditional algorithm.

The detection results are evaluated as follows:

Let ALL denote the total number of pictures tested by the system. Let F denote the number of images in picture set 1, in which the system reports that one of the five target types is present; it comprises the images that contain no target but are reported as containing one, and the images that contain a target and are correctly identified, denoted FP and FN respectively, so that F = FP + FN. Let T denote the number of images in picture set 2, in which the system reports that no target is present; it comprises the images that contain no target and are correctly identified, and the images that contain a target but are reported as containing none, denoted TP and TN respectively, so that T = TP + TN. The system defines the following indexes according to the actual identification requirements:

Claims (1)

Priority Applications (1)

| Application Number | Priority Date | Filing Date | Title |
|---|---|---|---|
| CN201810235045.5A CN108564109B (en) | 2018-03-21 | 2018-03-21 | Remote sensing image target detection method based on deep learning |

Applications Claiming Priority (1)

| Application Number | Priority Date | Filing Date | Title |
|---|---|---|---|
| CN201810235045.5A CN108564109B (en) | 2018-03-21 | 2018-03-21 | Remote sensing image target detection method based on deep learning |

Publications (2)

| Publication Number | Publication Date |
|---|---|
| CN108564109A (en) | 2018-09-21 |
| CN108564109B (en) | 2021-08-10 |

Family

ID=63532037

Family Applications (1)

| Application Number | Title | Priority Date | Filing Date |
|---|---|---|---|
| CN201810235045.5A Active CN108564109B (en) | 2018-03-21 | 2018-03-21 | Remote sensing image target detection method based on deep learning |

Country Status (1)

| Country | Link |
|---|---|
| CN (1) | CN108564109B (en) |

Families Citing this family (66)

| Publication number | Priority date | Publication date | Assignee | Title |
|---|---|---|---|---|
| CN109377501A (en) * | 2018-09-30 | 2019-02-22 | 上海鹰觉科技有限公司 | Remote sensing images naval vessel dividing method and system based on transfer learning |
| CN109344778A (en) * | 2018-10-10 | 2019-02-15 | 成都信息工程大学 | A method of extracting road information from UAV images based on generative adversarial network |
| US10311321B1 (en) * | 2018-10-26 | 2019-06-04 | StradVision, Inc. | Learning method, learning device using regression loss and testing method, testing device using the same |
| CN109544563B (en) * | 2018-11-12 | 2021-08-17 | 北京航空航天大学 | A passive millimeter wave image human target segmentation method for contraband security inspection |
| CN109543740B (en) * | 2018-11-14 | 2022-07-15 | 哈尔滨工程大学 | A target detection method based on generative adversarial network |
| CN109859110B (en) * | 2018-11-19 | 2023-01-06 | 华南理工大学 | Panchromatic Sharpening Method of Hyperspectral Image Based on Spectral Dimension Control Convolutional Neural Network |
| CN111259710B (en) * | 2018-12-03 | 2022-06-10 | 魔门塔(苏州)科技有限公司 | Parking space structure detection model training method adopting parking space frame lines and end points |
| CN109727207B (en) * | 2018-12-06 | 2022-12-16 | 华南理工大学 | Hyperspectral Image Sharpening Method Based on Spectral Prediction Residual Convolutional Neural Network |
| US10748033B2 | 2018-12-11 | 2020-08-18 | Industrial Technology Research Institute | Object detection method using CNN model and object detection apparatus using the same |
| CN110782398B (en) * | 2018-12-13 | 2020-12-18 | 北京嘀嘀无限科技发展有限公司 | Image processing method, generative countermeasure network system and electronic device |
| CN110110576B (en) * | 2019-01-03 | 2021-03-09 | 北京航空航天大学 | A thermal infrared semantic generation method for traffic scenes based on twin semantic network |
| CN109740677A (en) * | 2019-01-07 | 2019-05-10 | 湖北工业大学 | A Semi-Supervised Classification Method Based on Principal Component Analysis to Improve Generative Adversarial Networks |
| CN109858482B (en) * | 2019-01-16 | 2020-04-14 | 创新奇智(重庆)科技有限公司 | Image key area detection method and system and terminal equipment |
| CN109886114A (en) * | 2019-01-18 | 2019-06-14 | 杭州电子科技大学 | A kind of Ship Target Detection method based on cluster translation feature extraction strategy |
| CN111488893B (en) * | 2019-01-25 | 2023-05-30 | 银河水滴科技(北京)有限公司 | An image classification method and device |
| US10402977B1 (en) * | 2019-01-25 | 2019-09-03 | StradVision, Inc. | Learning method and learning device for improving segmentation performance in road obstacle detection required to satisfy level 4 and level 5 of autonomous vehicles using laplacian pyramid network and testing method and testing device using the same |
| CN109961009B (en) * | 2019-02-15 | 2023-10-31 | 平安科技(深圳)有限公司 | Pedestrian detection method, system, device and storage medium based on deep learning |

| CN109902602B (en) * | 2019-02-16 | 2021-04-30 | 北京工业大学 | Method for identifying foreign matter material of airport runway based on antagonistic neural network data enhancement |

| CN109859143B (en) * | 2019-02-22 | 2020-09-29 | 中煤航测遥感集团有限公司 | Hyperspectral image panchromatic sharpening method, device and electronic device |

| CN109948469B (en) * | 2019-03-01 | 2022-11-29 | 吉林大学 | Automatic inspection robot instrument detection and identification method based on deep learning |

| CN109919123B (en) * | 2019-03-19 | 2021-05-11 | 自然资源部第一海洋研究所 | Oil spill detection method on sea surface based on multi-scale feature deep convolutional neural network |

| CN109949299A (en) * | 2019-03-25 | 2019-06-28 | 东南大学 | An automatic segmentation method for cardiac medical images |

| CN110175548B (en) * | 2019-05-20 | 2022-08-23 | 中国科学院光电技术研究所 | Remote sensing image building extraction method based on attention mechanism and channel information |

| CN110197147B (en) * | 2019-05-23 | 2022-12-02 | 星际空间(天津)科技发展有限公司 | Building example extraction method, device, storage medium and equipment of remote sensing image |

| CN110222622B (en) * | 2019-05-31 | 2023-05-12 | 甘肃省祁连山水源涵养林研究院 | Environment soil detection method and device |

| CN110427793B (en) * | 2019-08-01 | 2022-04-26 | 厦门商集网络科技有限责任公司 | A barcode detection method and system based on deep learning |

| CN110473144B (en) * | 2019-08-07 | 2023-04-25 | 南京信息工程大学 | Image super-resolution reconstruction method based on Laplacian pyramid network |

| CN110472699A (en) * | 2019-08-24 | 2019-11-19 | 福州大学 | A kind of harmful biological motion blurred picture detection method of field of electric force institute based on GAN |

| CN110502654A (en) * | 2019-08-26 | 2019-11-26 | 长光卫星技术有限公司 | A kind of object library generation system suitable for multi-source heterogeneous remotely-sensed data |

| CN110763698B (en) * | 2019-10-12 | 2022-01-14 | 仲恺农业工程学院 | Hyperspectral citrus leaf disease identification method based on characteristic wavelength |

| CN110751699B (en) * | 2019-10-15 | 2023-03-10 | 西安电子科技大学 | Color reconstruction method of optical remote sensing image based on convolutional neural network |

| CN110929618B (en) * | 2019-11-15 | 2023-06-20 | 国网江西省电力有限公司电力科学研究院 | Potential safety hazard detection and assessment method for power distribution network crossing type building |

| CN111026899A (en) * | 2019-12-11 | 2020-04-17 | 兰州理工大学 | Product generation method based on deep learning |

| CN111274865B (en) * | 2019-12-14 | 2023-09-19 | 深圳先进技术研究院 | A remote sensing image cloud detection method and device based on fully convolutional neural network |

| CN111127416A (en) * | 2019-12-19 | 2020-05-08 | 武汉珈鹰智能科技有限公司 | An automatic detection method for surface defects of concrete structures based on computer vision |

| CN111027509B (en) * | 2019-12-23 | 2022-02-11 | 武汉大学 | Hyperspectral image target detection method based on double-current convolution neural network |

| CN111210483B (en) * | 2019-12-23 | 2023-04-18 | 中国人民解放军空军研究院战场环境研究所 | Simulated satellite cloud picture generation method based on generation of countermeasure network and numerical mode product |

| CN111161250B (en) * | 2019-12-31 | 2023-05-26 | 南遥科技(广东)有限公司 | A method and device for detecting dense houses in multi-scale remote sensing images |

| CN111368843B (en) * | 2020-03-06 | 2022-06-10 | 电子科技大学 | Method for extracting lake on ice based on semantic segmentation |

| CN111428781A (en) * | 2020-03-20 | 2020-07-17 | 中国科学院深圳先进技术研究院 | Remote sensing image feature classification method and system |

| CN111428678B (en) * | 2020-04-02 | 2023-06-23 | 山东卓智软件股份有限公司 | Method for generating remote sensing image sample expansion of countermeasure network under space constraint condition |

| CN111553212B (en) * | 2020-04-16 | 2022-02-22 | 中国科学院深圳先进技术研究院 | Remote sensing image target detection method based on smooth frame regression function |

| CN111612066B (en) * | 2020-05-21 | 2022-03-08 | 成都理工大学 | Remote sensing image classification method based on depth fusion convolutional neural network |

| CN111611968B (en) * | 2020-05-29 | 2022-02-01 | 中国科学院西北生态环境资源研究院 | Processing method of remote sensing image and remote sensing image processing model |

| CN111832404B (en) * | 2020-06-04 | 2021-05-18 | 中国科学院空天信息创新研究院 | A small sample remote sensing feature classification method and system based on feature generation network |

| CN111696066B (en) * | 2020-06-13 | 2022-04-19 | 中北大学 | Multi-band image synchronous fusion and enhancement method based on improved WGAN-GP |

| CN111796310B (en) * | 2020-07-02 | 2024-02-02 | 武汉北斗星度科技有限公司 | Beidou GNSS-based high-precision positioning method, device and system |

| TWI740565B (en) * | 2020-07-03 | 2021-09-21 | 財團法人國家實驗研究院國家高速網路與計算中心 | Method for improving remote sensing image quality, computer program product and system thereof |

| CN111798662A (en) * | 2020-07-31 | 2020-10-20 | 公安部交通管理科学研究所 | Urban traffic accident early warning method based on space-time gridding data |

| CN112100908B (en) * | 2020-08-31 | 2024-03-22 | 西安工程大学 | Clothing design method for generating countermeasure network based on multi-condition deep convolution |

| CN112184554B (en) * | 2020-10-13 | 2022-08-23 | 重庆邮电大学 | Remote sensing image fusion method based on residual mixed expansion convolution |

| CN112926383B (en) * | 2021-01-08 | 2023-03-03 | 浙江大学 | Automatic target identification system based on underwater laser image |

| CN112529114B (en) * | 2021-01-13 | 2021-06-29 | 北京云真信科技有限公司 | Target information identification method based on GAN, electronic device and medium |

| CN113012069B (en) * | 2021-03-17 | 2023-09-05 | 中国科学院西安光学精密机械研究所 | Optical remote sensing image quality improvement method combined with deep learning in wavelet transform domain |

| CN113989478B (en) * | 2021-10-13 | 2025-04-04 | 北京科技大学设计研究院有限公司 | A steel plate character detection method and device based on multi-level network fusion |

| CN114241274B (en) * | 2021-11-30 | 2023-04-07 | 电子科技大学 | Small target detection method based on super-resolution multi-scale feature fusion |

| CN114241339A (en) * | 2022-02-28 | 2022-03-25 | 山东力聚机器人科技股份有限公司 | Remote sensing image recognition model, method and system, server and medium |

| CN114708494B (en) * | 2022-03-04 | 2025-05-09 | 中国农业科学院农业信息研究所 | A method and system for identifying rural homestead buildings |

| CN114973021A (en) * | 2022-06-15 | 2022-08-30 | 北京鹏鹄物宇科技发展有限公司 | Satellite image data processing system and method based on deep learning |

| CN115512098B (en) * | 2022-09-26 | 2023-09-01 | 重庆大学 | Bridge electronic inspection system and inspection method |

| CN115906624A (en) * | 2022-11-11 | 2023-04-04 | 湖南航天远望科技有限公司 | Hazardous chemical gas spectrum generation method, terminal equipment and storage medium |

| CN115689947B (en) * | 2022-12-30 | 2023-05-26 | 杭州魔点科技有限公司 | Image sharpening method, system, electronic device and storage medium |

| CN117058009B (en) * | 2023-06-21 | 2025-06-27 | 西北工业大学深圳研究院 | Full-color sharpening method based on conditional diffusion model |

| CN116958790A (en) * | 2023-07-19 | 2023-10-27 | 昆明船舶设备研究试验中心(中国船舶集团有限公司七五〇试验场) | Agile image target detection method based on multiple parallel computing |

| CN117237334B (en) * | 2023-11-09 | 2024-03-26 | 江西联益光学有限公司 | Deep learning-based method for detecting stray light of mobile phone lens |

| CN118262245B (en) * | 2024-05-28 | 2024-09-03 | 山东锋士信息技术有限公司 | River and lake management violation problem remote sensing monitoring method based on Laplace and similarity |

Citations (1)

| Publication number | Priority date | Publication date | Assignee | Title |

|---|---|---|---|---|

| CN104268847A (en) * | 2014-09-23 | 2015-01-07 | 西安电子科技大学 | Infrared light image and visible light image fusion method based on interactive non-local average filtering |

Family Cites Families (2)

| Publication number | Priority date | Publication date | Assignee | Title |

|---|---|---|---|---|

| US8737733B1 (en) * | 2011-04-22 | 2014-05-27 | Digitalglobe, Inc. | Hyperspherical pan sharpening |

| US8699790B2 (en) * | 2011-11-18 | 2014-04-15 | Mitsubishi Electric Research Laboratories, Inc. | Method for pan-sharpening panchromatic and multispectral images using wavelet dictionaries |

- 2018-03-21: CN application CN201810235045.5A, patent CN108564109B, status Active

Non-Patent Citations (1)

| Title |

|---|

| 《Remote sensing image fusion based on two-stream fusion network》;LiuYunhong,et al;《International Conference on Multimedia Modeling》;20180113;1-14 * |

Also Published As

| Publication number | Publication date |

|---|---|

| CN108564109A (en) | 2018-09-21 |

Similar Documents

| Publication | Publication Date | Title |

|---|---|---|

| CN108564109B (en) | Remote sensing image target detection method based on deep learning | |

| CN108537742B (en) | A Panchromatic Sharpening Method for Remote Sensing Images Based on Generative Adversarial Networks | |

| CN110472627B (en) | An end-to-end SAR image recognition method, device and storage medium | |

| Peng et al. | Convolutional transformer-based few-shot learning for cross-domain hyperspectral image classification | |

| CN110929607B (en) | Remote sensing identification method and system for urban building construction progress | |

| CN111291675B (en) | Deep learning-based hyperspectral ancient painting detection and identification method | |

| Lin et al. | Active-learning-incorporated deep transfer learning for hyperspectral image classification | |

| CN111368896A (en) | A classification method of hyperspectral remote sensing images based on dense residual 3D convolutional neural network | |

| CN109146831A (en) | Remote sensing image fusion method and system based on double branch deep learning networks | |

| CN107871119A (en) | A kind of object detection method learnt based on object space knowledge and two-stage forecasting | |

| CN108229551B (en) | Hyperspectral remote sensing image classification method based on compact dictionary sparse representation | |

| Qin et al. | HTD-TS 3: Weakly supervised hyperspectral target detection based on transformer via spectral–spatial similarity | |

| Li et al. | HTDFormer: Hyperspectral target detection based on transformer with distributed learning | |

| CN119131088B (en) | Infrared image weak and small target detection tracking method based on light hypergraph network | |

| CN119229278B (en) | Remote sensing image rotation small target detection method fusing cognitive characteristics | |

| Zheng et al. | Tuning a SAM-based model with multicognitive visual adapter to remote sensing instance segmentation | |

| CN117671666A (en) | A target recognition method based on adaptive graph convolutional neural network | |

| Chen et al. | Class-aware domain adaptation for coastal land cover mapping using optical remote sensing imagery | |

| CN115690549B (en) | Target detection method for realizing multi-dimensional feature fusion based on parallel interactive architecture model | |

| CN118552843A (en) | Hyperspectral image offset label learning method based on heterogeneous network cross disambiguation | |

| CN113705731A (en) | End-to-end image template matching method based on twin network | |

| CN117876782A (en) | A multi-scale feature interaction network implementation method for processing dual-temporal remote sensing image changes | |

| Xiao et al. | Robust Land Cover Classification with Local–Global Information Decoupling to Address Remote Sensing Anomalous Data | |

| CN109447009B (en) | Hyperspectral image classification method based on subspace nuclear norm regularization regression model | |

| Duan et al. | Buildings extraction from remote sensing data using deep learning method based on improved U-Net network |

Legal Events

| Date | Code | Title | Description |

|---|---|---|---|

| PB01 | Publication | ||

| SE01 | Entry into force of request for substantive examination | ||

| GR01 | Patent grant | ||
