Disclosure of Invention

The invention aims to overcome the shortcomings of the prior art by providing a three-dimensional cotton pest monitoring method and system based on a small unmanned aerial vehicle cluster. It overcomes the inability of conventional unmanned aerial vehicle remote sensing to acquire information on the middle and lower layers of cotton plants, and monitors pest information in the upper, middle and lower layers simultaneously, so that the degree of pest stress suffered by the cotton can be evaluated objectively and comprehensively.

To achieve this purpose, the invention provides the following technical solution:

A three-dimensional cotton pest monitoring method based on a small unmanned aerial vehicle cluster comprises the following steps:

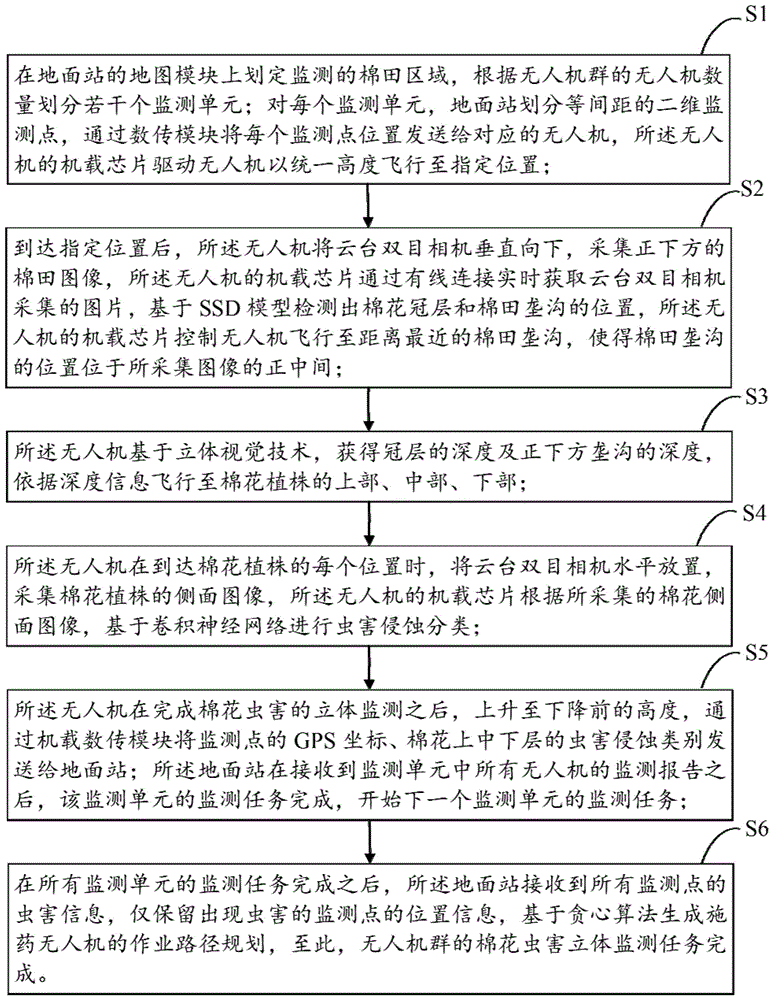

S1, defining the cotton field area to be monitored on a map module of a ground station, and dividing it into a plurality of monitoring units according to the number of unmanned aerial vehicles in the unmanned aerial vehicle cluster; for each monitoring unit, the ground station divides two-dimensional monitoring points at equal intervals and sends the position of each monitoring point to the corresponding unmanned aerial vehicle through a data transmission module, and the airborne chip of each unmanned aerial vehicle drives the unmanned aerial vehicle to fly to its designated position at a uniform height;

S2, after the designated position is reached, the unmanned aerial vehicle points its gimbal-mounted binocular camera vertically downward to collect images of the cotton field directly below; the airborne chip of the unmanned aerial vehicle acquires the images collected by the binocular camera in real time through a wired connection, detects the positions of the cotton canopy and the cotton field furrows based on an SSD model, and controls the unmanned aerial vehicle to fly to the nearest cotton field furrow so that the furrow lies at the center of the acquired image;

S3, the unmanned aerial vehicle obtains the depth of the canopy and the depth of the furrow below it based on stereoscopic vision, and flies in turn to the upper, middle and lower parts of the cotton plant according to the depth information;

S4, when the unmanned aerial vehicle reaches each part of the cotton plant, the gimbal-mounted binocular camera is turned to the horizontal to collect a side image of the cotton plant, and the airborne chip of the unmanned aerial vehicle classifies the pest damage in the collected side image based on a convolutional neural network;

S5, after the unmanned aerial vehicle completes the three-dimensional monitoring of cotton pests, it ascends to the height it held before descending, and sends the GPS coordinates of the monitoring point and the pest damage categories of the upper, middle and lower layers of the cotton to the ground station through the airborne data transmission module; after the ground station has received the monitoring reports of all unmanned aerial vehicles in the monitoring unit, the monitoring task of that monitoring unit is complete and the monitoring task of the next monitoring unit begins;

S6, after the monitoring tasks of all monitoring units are completed, the ground station holds the pest information of all monitoring points, retains only the position information of the monitoring points where pests are present, and generates an operation path plan for the pesticide application unmanned aerial vehicle based on a greedy algorithm, thereby completing the three-dimensional cotton pest monitoring task of the unmanned aerial vehicle cluster.

Further, the step S1 includes the steps of:

S101, defining the whole cotton field area to be monitored on the map module of the ground station, and calculating the width and the height of the cotton field area; wherein the cotton field area to be monitored is limited to a rectangular area, which is the common case in aerial plant protection operations;

S102, the ground station calculates the size of a monitoring unit according to the number of unmanned aerial vehicles in the small unmanned aerial vehicle cluster and the monitoring range of a single unmanned aerial vehicle; the width and height of the monitoring unit are calculated according to the following formulas:

$$w_{unit} = a \times w_{plane}$$

$$h_{unit} = b \times h_{plane}$$

in the formulas, $w_{unit}$ and $h_{unit}$ are the width and height of the monitoring unit, $w_{plane}$ and $h_{plane}$ are the width and height of the monitoring range of a single unmanned aerial vehicle, and $a \times b$ is the number of unmanned aerial vehicles in the cluster, wherein $a$ is the number of unmanned aerial vehicles in the transverse direction and $b$ is the number in the longitudinal direction; the ground station then calculates the number of monitoring units from the width and height of the monitoring unit according to the following formula:

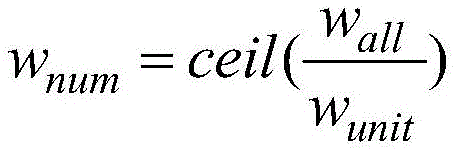

wherein $w_{num}$ and $h_{num}$ are the numbers of monitoring units in the transverse and longitudinal directions, $w_{all}$ and $h_{all}$ are the width and height of the whole cotton field area to be monitored, and the ceil function denotes rounding up;

S103, the ground station divides the first monitoring unit into $a \times b$ monitoring points at equal intervals in two-dimensional space; the number of monitoring points equals the number of unmanned aerial vehicles in the cluster, and each monitoring point corresponds to one unmanned aerial vehicle; the ground station sends the position of each monitoring point to the corresponding unmanned aerial vehicle through the data transmission module; the airborne chip of the unmanned aerial vehicle receives the position of the monitoring point through the airborne data transmission module and drives the unmanned aerial vehicle to fly to the designated position at a uniform height.
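The partitioning in S102 and S103 can be illustrated with a minimal Python sketch. It assumes a local planar coordinate system in metres, and it places each monitoring point at the centre of one unmanned aerial vehicle's monitoring range; the text only requires equal intervals, so the centring is an assumption. The function names `plan_units` and `monitoring_points` are hypothetical.

```python
import math

def plan_units(w_all, h_all, w_plane, h_plane, a, b):
    """Return (w_unit, h_unit, w_num, h_num) for an a x b cluster.

    w_unit = a * w_plane, h_unit = b * h_plane, and the unit counts
    are obtained by rounding up, as described for the ceil function.
    """
    w_unit = a * w_plane
    h_unit = b * h_plane
    w_num = math.ceil(w_all / w_unit)
    h_num = math.ceil(h_all / h_unit)
    return w_unit, h_unit, w_num, h_num

def monitoring_points(x0, y0, w_plane, h_plane, a, b):
    """Equally spaced a x b points inside the unit whose corner is (x0, y0).

    Assumption: each point sits at the centre of one drone's range.
    """
    return [(x0 + (i + 0.5) * w_plane, y0 + (j + 0.5) * h_plane)
            for j in range(b) for i in range(a)]

if __name__ == "__main__":
    print(plan_units(100, 60, 10, 10, 3, 2))
    print(monitoring_points(0, 0, 10, 10, 3, 2))
```

Rounding up means the last row or column of monitoring units may extend beyond the field boundary; how those points are handled is not specified in the text.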

Further, the step S2 includes the steps of:

S201, obtaining an SSD model for detecting the cotton canopy and cotton field furrows, and deploying the SSD model on each unmanned aerial vehicle before the task starts; wherein obtaining the SSD model comprises:

S2011, designing the network structure of the SSD model for the application scene, the SSD model comprising feature extraction, prior box matching and non-maximum suppression; in the confidence calculation of the prior box matching, the number of prediction categories is set to 2;

S2012, collecting unmanned aerial vehicle images of cotton field plots and annotating the positions and categories of the cotton canopy and cotton field furrows to form a data set for cotton canopy and furrow detection; training the SSD model on the data set by calculating the localization error and the confidence error and adjusting the parameters of the SSD model by backpropagation;

S202, after the unmanned aerial vehicle reaches the monitoring point, the gimbal-mounted binocular camera is pointed vertically downward to collect an image of the area directly below, and the collected image is transmitted to the airborne chip through a wired USB connection; the airborne chip calls the trained SSD model to detect the positions of the cotton canopy and the cotton field furrows;

S203, the unmanned aerial vehicle finds the nearest cotton field furrow and flies to a position above the center of that furrow, so that the center of the furrow lies in the central area of the image; wherein the nearest cotton field furrow is the one whose center is the shortest distance from the center of the image, and the direction and magnitude of the position adjustment of the unmanned aerial vehicle are determined from the relative position of the center of that furrow with respect to the center of the image.
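The furrow selection in S203 can be sketched as follows. The sketch assumes that the detector returns furrow bounding boxes as `(x_min, y_min, x_max, y_max)` pixel tuples, which is a hypothetical format, and it returns only the pixel offset; converting that offset into a flight command depends on the flight controller and is not shown.

```python
import math

def box_center(box):
    """Centre (x, y) of a box given as (x_min, y_min, x_max, y_max)."""
    x_min, y_min, x_max, y_max = box
    return ((x_min + x_max) / 2.0, (y_min + y_max) / 2.0)

def nearest_furrow_offset(furrow_boxes, image_size):
    """Pick the furrow whose centre is closest to the image centre.

    Returns (box, dx, dy), where (dx, dy) is the pixel offset of the
    furrow centre from the image centre, or None if no furrow was found.
    """
    if not furrow_boxes:
        return None
    cx, cy = image_size[0] / 2.0, image_size[1] / 2.0

    def distance(box):
        bx, by = box_center(box)
        return math.hypot(bx - cx, by - cy)

    best = min(furrow_boxes, key=distance)
    bx, by = box_center(best)
    return best, bx - cx, by - cy

if __name__ == "__main__":
    boxes = [(0, 0, 100, 480), (300, 0, 360, 480), (500, 0, 640, 480)]
    print(nearest_furrow_offset(boxes, (640, 480)))
```

The sign of `(dx, dy)` gives the direction of the adjustment and its length gives the magnitude, which matches the relative-position rule stated in S203.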

Further, the step S3 includes the steps of:

S301, before the task starts, calibrating the binocular camera of each unmanned aerial vehicle to obtain its internal and external parameters, which comprise the intrinsic parameters of each camera, the distortion coefficients, and the relative position parameters of the left and right cameras;

S302, after the unmanned aerial vehicle reaches the position directly above a cotton field furrow, acquiring binocular images of the area directly below, performing distortion correction based on the distortion coefficients of the cameras, finding matched pixel pairs in the left and right camera images, and calculating the depth of each matched pixel pair based on the internal and external parameters of the cameras;

S303, based on the cotton canopy and furrow positions output by the SSD model, calculating the average pixel depth of the furrow area directly below and the average pixel depth of the cotton canopy adjacent to that furrow, and from these calculating the depths of the upper, middle and lower parts of the cotton plant; wherein the depths of the upper, middle and lower parts of the cotton plant are calculated by the following formulas:

$$depth_{up} = depth_{crown} + 0.1 \times (depth_{ditch} - depth_{crown})$$

$$depth_{middle} = depth_{crown} + 0.5 \times (depth_{ditch} - depth_{crown})$$

$$depth_{down} = depth_{crown} + 0.8 \times (depth_{ditch} - depth_{crown})$$

wherein $depth_{up}$, $depth_{middle}$ and $depth_{down}$ respectively represent the depths of the upper, middle and lower parts of the cotton plant; $depth_{crown}$ and $depth_{ditch}$ respectively represent the depths of the cotton canopy and the cotton field furrow; to reach the upper, middle and lower parts of the cotton plant, the unmanned aerial vehicle is controlled by its airborne chip to descend in three successive steps, whose magnitudes are calculated by the following formulas:

$$margin_1 = depth_{crown} + 0.1 \times (depth_{ditch} - depth_{crown})$$

$$margin_2 = 0.4 \times (depth_{ditch} - depth_{crown})$$

$$margin_3 = 0.3 \times (depth_{ditch} - depth_{crown})$$

wherein $margin_1$, $margin_2$ and $margin_3$ represent the magnitudes of the first, second and third descents of the unmanned aerial vehicle, respectively.
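As a check on the formulas above, the following minimal Python sketch computes the three target depths and the three descent magnitudes, and confirms that the cumulative descents land on the target depths (0.1 + 0.4 = 0.5 and 0.5 + 0.3 = 0.8 of the canopy-to-furrow span). The function names are hypothetical, and depths are assumed to be measured downward from the camera in metres.

```python
def layer_depths(depth_crown, depth_ditch):
    """Depths of the upper, middle and lower parts of the plant."""
    span = depth_ditch - depth_crown
    return (depth_crown + 0.1 * span,
            depth_crown + 0.5 * span,
            depth_crown + 0.8 * span)

def descent_margins(depth_crown, depth_ditch):
    """Magnitudes of the first, second and third descents."""
    span = depth_ditch - depth_crown
    return (depth_crown + 0.1 * span, 0.4 * span, 0.3 * span)

if __name__ == "__main__":
    up, middle, down = layer_depths(2.0, 3.0)
    m1, m2, m3 = descent_margins(2.0, 3.0)
    # Cumulative descents should coincide with the three target depths.
    print(up, middle, down)
    print(m1, m1 + m2, m1 + m2 + m3)
```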

Further, the step S4 includes the steps of:

S401, obtaining a convolutional neural network for cotton pest damage classification, and deploying the convolutional neural network on each unmanned aerial vehicle before the task starts; wherein obtaining the convolutional neural network comprises:

S4011, designing the convolutional neural network for the application scene, the network comprising convolutional layers, activation layers, pooling layers and fully connected layers, the output of the last fully connected layer being a 1 × 4 matrix that represents the probability distribution over the normal, light, moderate and severe categories;

S4012, collecting unmanned aerial vehicle images of the sides of cotton plants and labeling them according to ground survey results to form a data set for cotton pest classification; training the convolutional neural network on the data set, using the negative log-likelihood between the network output and the category label as the error and adjusting the parameters of the network by backpropagation;

S402, when the unmanned aerial vehicle reaches each part of the cotton plant, its gimbal-mounted binocular camera is turned to the horizontal to collect a side image of the cotton plant, and the image is transmitted to the airborne chip of the unmanned aerial vehicle in real time through a USB cable; the airborne chip calls the trained convolutional neural network to classify the pest damage in the side image.
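The training error in S4012 and the class decision in S402 can be illustrated in plain Python. The sketch assumes that a softmax is applied to the four outputs of the last fully connected layer to obtain the probability distribution; the text does not name the normalization, so this is an assumption, and the function names are hypothetical.

```python
import math

CLASSES = ("normal", "light", "moderate", "severe")

def softmax(logits):
    """Numerically stable softmax over the 1 x 4 network output."""
    peak = max(logits)
    exps = [math.exp(v - peak) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]

def nll_loss(logits, label_index):
    """Negative log-likelihood of the labelled class."""
    return -math.log(softmax(logits)[label_index])

def predict(logits):
    """Category with the highest predicted probability."""
    probs = softmax(logits)
    return CLASSES[probs.index(max(probs))]

if __name__ == "__main__":
    logits = [0.1, 2.0, 0.3, -1.0]
    print(predict(logits), nll_loss(logits, 1))
```

During training, this loss would be averaged over a batch and minimized by backpropagation; only the per-sample error is shown here.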

Further, the step S6 includes the steps of:

S601, after the monitoring tasks of all monitoring units are completed, the ground station holds the pest information of all monitoring points; it discards the monitoring points whose condition is normal, retains only the position information of the monitoring points where pests are present, and forms a monitoring information table as follows:

$$P_1(x_1, y_1),\ P_2(x_2, y_2),\ \ldots,\ P_n(x_n, y_n)$$

wherein $n$ represents the number of retained monitoring points where pests are present, $P_n$ represents the $n$-th point, and $(x_n, y_n)$ represents the longitude and latitude of the $n$-th point;

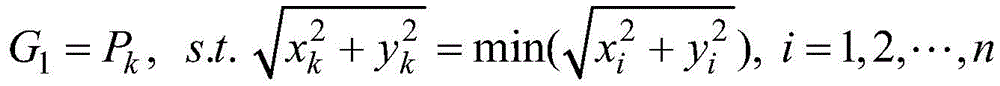

S602, generating the operation path plan of the pesticide application unmanned aerial vehicle based on a greedy algorithm; the first operation point $G_1$ of the planned path is determined first, the selection rule being the minimum 2-norm of the longitude and latitude, as follows:

wherein $(x_k, y_k)$ represents the longitude and latitude of the next operation point of the planned path, $(x_i, y_i)$ represents the longitude and latitude of the $i$-th point in the monitoring information table, and $k$ is an integer between 1 and $n$;

after the greedy algorithm adds the first operation point $G_1$ to the planned path, the corresponding point $P_k$ is deleted from the monitoring information table; the greedy algorithm then searches the monitoring information table for the next operation point, the selection rule being that the 2-norm of the coordinate difference between the current point and all the operation points in the planned path is the minimum, as follows:

wherein $(x_i, y_i)$ represents the longitude and latitude of the $i$-th point $P_i$ in the monitoring information table, the coordinates of the $j$-th operation point $G_j$ in the planned path are likewise its longitude and latitude, $t$ represents the number of monitoring points currently in the monitoring information table, $s$ represents the number of operation points currently in the planned path, and $l$ is an integer between 1 and $s$; the greedy algorithm adds the operation point $G_{s+1}$ to the planned path and deletes the corresponding point $P_k$ from the monitoring information table; the greedy algorithm repeats this process, and the path planning ends when no points remain in the monitoring information table.
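The greedy procedure of S602 can be sketched in Python under one stated assumption: the next operation point is taken to be the remaining point nearest to the most recently added operation point, which is one reading of the selection rule (a nearest-neighbour heuristic). The first point is the one with the smallest 2-norm of its longitude and latitude, as stated. The function name `greedy_path` is hypothetical.

```python
import math

def greedy_path(points):
    """Order infested monitoring points into an operation path.

    points: list of (longitude, latitude) tuples from the monitoring
    information table. Returns the planned path as a new list.
    """
    remaining = list(points)
    if not remaining:
        return []
    # First operation point G_1: minimum 2-norm of (longitude, latitude).
    first = min(remaining, key=lambda p: math.hypot(p[0], p[1]))
    path = [first]
    remaining.remove(first)
    # Assumption: each next point is nearest to the last point added.
    while remaining:
        last = path[-1]
        nxt = min(remaining,
                  key=lambda p: math.hypot(p[0] - last[0], p[1] - last[1]))
        path.append(nxt)
        remaining.remove(nxt)
    return path

if __name__ == "__main__":
    print(greedy_path([(3, 4), (1, 1), (1, 2), (5, 5)]))
```

A nearest-neighbour ordering visits every point exactly once but is a heuristic; it does not in general yield the shortest possible path.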

A three-dimensional cotton pest monitoring system based on a small unmanned aerial vehicle cluster comprises:

a cotton canopy and furrow position detection unit, used for detecting the positions of the cotton canopy and the furrows in unmanned aerial vehicle images; the unit comprises feature extraction, prior box matching and non-maximum suppression; in the confidence calculation of the prior box matching, the number of prediction categories is set to 2, corresponding to the two categories cotton and non-cotton;

a cotton canopy and furrow depth calculation unit, used for estimating the depths of the furrow directly below the unmanned aerial vehicle and of the cotton canopy around that furrow; the unit comprises camera calibration, image pixel depth calculation, and cotton canopy and furrow depth calculation; the camera calibration obtains the internal and external parameters of the binocular camera; the image pixel depth calculation performs distortion correction according to the distortion coefficients of the cameras, finds matched pixel pairs in the left and right camera images, and calculates the depth of each matched pixel pair based on the internal and external parameters of the cameras; the cotton canopy and furrow depth calculation takes, based on the detected cotton canopy and furrow positions, the average pixel depth of the furrow area directly below as the furrow depth and the average pixel depth of the cotton canopy adjacent to that furrow as the canopy depth;

a cotton pest three-dimensional monitoring unit, used for image acquisition and pest severity classification at the upper, middle and lower parts of the cotton plant; with the unmanned aerial vehicle located above a cotton field furrow, the descent magnitudes required to reach the upper, middle and lower parts are calculated from the depths of the cotton canopy and the furrow; the images are acquired with the gimbal-mounted binocular camera turned to the horizontal after the unmanned aerial vehicle reaches the designated part of the plant, and the pest severity classification is performed by the airborne chip calling a pre-trained convolutional neural network;

an operation path planning unit, used for generating the travel path plan of the pesticide application unmanned aerial vehicle from the monitoring information table according to the principle of minimizing energy consumption; the monitoring information table contains the position information of all monitoring points where pests are present, the position information comprising the longitude and latitude of each monitoring point; the travel path plan is obtained by applying the greedy algorithm to the monitoring information table, with the aim of minimizing the energy consumption of the unmanned aerial vehicle while ensuring that all pest positions are visited.

Compared with the prior art, the invention has the following advantages and beneficial effects:

The invention uses a small unmanned aerial vehicle cluster to monitor pests at different parts of the cotton plant and thereby obtain information on the pest stress suffered by the cotton. Monocular vision guides the unmanned aerial vehicle to the furrow between cotton plants; binocular vision measures the depths of the canopy and the furrow and guides the unmanned aerial vehicle down to the upper, middle and lower parts of the plant, where it acquires images and analyzes pest damage. The method thus overcomes the inability of conventional unmanned aerial vehicle remote sensing to obtain information on the middle and lower parts of the cotton, and evaluates the degree of pest stress comprehensively and objectively. Because monitoring is carried out by a cluster of small unmanned aerial vehicles, the operating efficiency of the system is maintained and pest stress information can be collected and analyzed quickly and comprehensively. In summary, the invention monitors pest information at the middle and bottom of the cotton at close range, avoids the limitation that conventional unmanned aerial vehicle remote sensing cannot collect pest information from the middle and lower layers of the cotton, monitors the pest distribution over a large cotton field quickly and effectively, and provides decision information for precise pesticide application by plant protection unmanned aerial vehicles.

Detailed Description

The present invention will be further described with reference to the following specific examples.

Referring to FIG. 1, the three-dimensional cotton pest monitoring method based on a small unmanned aerial vehicle cluster provided by this embodiment includes the following steps:

S1, defining the cotton field area to be monitored on a map module of a ground station, and dividing it into a plurality of monitoring units according to the number of unmanned aerial vehicles in the unmanned aerial vehicle cluster; for each monitoring unit, the ground station divides two-dimensional monitoring points at equal intervals and sends the position of each monitoring point to the corresponding unmanned aerial vehicle through a data transmission module, and the airborne chip of each unmanned aerial vehicle drives the unmanned aerial vehicle to fly to its designated position at a uniform height; which comprises the following steps:

S101, defining the whole cotton field area to be monitored on the map module of the ground station, and calculating the width and the height of the cotton field area; wherein the cotton field area to be monitored is limited to a rectangular area, which is the common case in aerial plant protection operations;

S102, the ground station calculates the size of a monitoring unit according to the number of unmanned aerial vehicles in the small unmanned aerial vehicle cluster and the monitoring range of a single unmanned aerial vehicle; the width and height of the monitoring unit are calculated according to the following formulas:

$$w_{unit} = a \times w_{plane}$$

$$h_{unit} = b \times h_{plane}$$

in the formulas, $w_{unit}$ and $h_{unit}$ are the width and height of the monitoring unit, $w_{plane}$ and $h_{plane}$ are the width and height of the monitoring range of a single unmanned aerial vehicle, and $a \times b$ is the number of unmanned aerial vehicles in the cluster, wherein $a$ is the number of unmanned aerial vehicles in the transverse direction and $b$ is the number in the longitudinal direction; the ground station then calculates the number of monitoring units from the width and height of the monitoring unit according to the following formulas:

$$w_{num} = \mathrm{ceil}\left(\frac{w_{all}}{w_{unit}}\right)$$

$$h_{num} = \mathrm{ceil}\left(\frac{h_{all}}{h_{unit}}\right)$$

wherein $w_{num}$ and $h_{num}$ are the numbers of monitoring units in the transverse and longitudinal directions, $w_{all}$ and $h_{all}$ are the width and height of the whole cotton field area to be monitored, and the ceil function denotes rounding up;

S103, the ground station divides the first monitoring unit into $a \times b$ monitoring points at equal intervals in two-dimensional space; the number of monitoring points equals the number of unmanned aerial vehicles in the cluster, and each monitoring point corresponds to one unmanned aerial vehicle; the ground station sends the position of each monitoring point to the corresponding unmanned aerial vehicle through the data transmission module; the airborne chip of the unmanned aerial vehicle receives the position of the monitoring point through the airborne data transmission module and drives the unmanned aerial vehicle to fly to the designated position at a uniform height.

S2, after the designated position is reached, the unmanned aerial vehicle points its gimbal-mounted binocular camera vertically downward to collect images of the cotton field directly below; the airborne chip of the unmanned aerial vehicle acquires the images collected by the binocular camera in real time through a wired connection, detects the positions of the cotton canopy and the cotton field furrows based on an SSD model, and controls the unmanned aerial vehicle to fly to the nearest cotton field furrow so that the furrow lies at the center of the acquired image; which comprises the following steps:

S201, obtaining an SSD model for detecting the cotton canopy and cotton field furrows, and deploying the SSD model on each unmanned aerial vehicle before the task starts; wherein obtaining the SSD model comprises:

S2011, designing the network structure of the SSD model for the application scene, the SSD model comprising feature extraction, prior box matching and non-maximum suppression; in the confidence calculation of the prior box matching, the number of prediction categories is set to 2;

S2012, collecting unmanned aerial vehicle images of cotton field plots and annotating the positions and categories of the cotton canopy and cotton field furrows to form a data set for cotton canopy and furrow detection; training the SSD model on the data set by calculating the localization error and the confidence error and adjusting the parameters of the SSD model by backpropagation;

S202, after the unmanned aerial vehicle reaches the monitoring point, the gimbal-mounted binocular camera is pointed vertically downward to collect an image of the area directly below, and the collected image is transmitted to the airborne chip through a wired USB connection; the airborne chip calls the trained SSD model to detect the positions of the cotton canopy and the cotton field furrows;

S203, the unmanned aerial vehicle finds the nearest cotton field furrow and flies to a position above the center of that furrow, so that the center of the furrow lies in the central area of the image; wherein the nearest cotton field furrow is the one whose center is the shortest distance from the center of the image, and the direction and magnitude of the position adjustment of the unmanned aerial vehicle are determined from the relative position of the center of that furrow with respect to the center of the image.
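The non-maximum suppression stage named in S2011 can be illustrated with a minimal Python sketch of the standard greedy procedure. The box format `(x_min, y_min, x_max, y_max)` and the IoU threshold of 0.5 are assumptions chosen for illustration; the text does not specify them, and a detector would normally apply the procedure separately for each category.

```python
def area(box):
    """Area of a box given as (x_min, y_min, x_max, y_max)."""
    return max(0.0, box[2] - box[0]) * max(0.0, box[3] - box[1])

def iou(a, b):
    """Intersection over union of two boxes."""
    ix = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    iy = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = ix * iy
    union = area(a) + area(b) - inter
    return inter / union if union > 0 else 0.0

def nms(boxes, scores, iou_threshold=0.5):
    """Return indices of kept boxes, highest confidence first."""
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    keep = []
    for i in order:
        # Keep a box only if it does not overlap a kept box too strongly.
        if all(iou(boxes[i], boxes[k]) <= iou_threshold for k in keep):
            keep.append(i)
    return keep

if __name__ == "__main__":
    boxes = [(0, 0, 10, 10), (1, 1, 11, 11), (20, 20, 30, 30)]
    print(nms(boxes, [0.9, 0.8, 0.7]))
```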

S3, the unmanned aerial vehicle obtains the depth of the canopy and the depth of the furrow below it based on stereoscopic vision, and flies in turn to the upper, middle and lower parts of the cotton plant according to the depth information; which comprises the following steps:

S301, before the task starts, calibrating the binocular camera of each unmanned aerial vehicle to obtain its internal and external parameters, which comprise the intrinsic parameters of each camera, the distortion coefficients, and the relative position parameters of the left and right cameras;

S302, after the unmanned aerial vehicle reaches the position directly above a cotton field furrow, acquiring binocular images of the area directly below, performing distortion correction based on the distortion coefficients of the cameras, finding matched pixel pairs in the left and right camera images, and calculating the depth of each matched pixel pair based on the internal and external parameters of the cameras;

S303, based on the cotton canopy and furrow positions output by the SSD model, calculating the average pixel depth of the furrow area directly below and the average pixel depth of the cotton canopy adjacent to that furrow, and from these calculating the depths of the upper, middle and lower parts of the cotton plant; wherein the depths of the upper, middle and lower parts of the cotton plant are calculated by the following formulas:

$$depth_{up} = depth_{crown} + 0.1 \times (depth_{ditch} - depth_{crown})$$

$$depth_{middle} = depth_{crown} + 0.5 \times (depth_{ditch} - depth_{crown})$$

$$depth_{down} = depth_{crown} + 0.8 \times (depth_{ditch} - depth_{crown})$$

wherein $depth_{up}$, $depth_{middle}$ and $depth_{down}$ respectively represent the depths of the upper, middle and lower parts of the cotton plant; $depth_{crown}$ and $depth_{ditch}$ respectively represent the depths of the cotton canopy and the cotton field furrow; to reach the upper, middle and lower parts of the cotton plant, the unmanned aerial vehicle is controlled by its airborne chip to descend in three successive steps, whose magnitudes are calculated by the following formulas:

$$margin_1 = depth_{crown} + 0.1 \times (depth_{ditch} - depth_{crown})$$

$$margin_2 = 0.4 \times (depth_{ditch} - depth_{crown})$$

$$margin_3 = 0.3 \times (depth_{ditch} - depth_{crown})$$

wherein $margin_1$, $margin_2$ and $margin_3$ represent the magnitudes of the first, second and third descents of the unmanned aerial vehicle, respectively.
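The pixel depth calculation in S302 and the region averaging in S303 can be sketched as follows. The sketch assumes an already rectified image pair, for which the standard relation depth = focal length × baseline / disparity holds, and it takes the disparity map as a plain list of rows; stereo matching itself is not shown. The parameter names `focal_px` and `baseline_m` and both function names are hypothetical.

```python
def depth_from_disparity(disparity_px, focal_px, baseline_m):
    """Depth in metres of one matched pixel pair of a rectified pair.

    Returns None where no valid match exists (disparity <= 0).
    """
    if disparity_px <= 0:
        return None
    return focal_px * baseline_m / disparity_px

def mean_region_depth(disparity_map, box, focal_px, baseline_m):
    """Average depth inside a detection box (x_min, y_min, x_max, y_max)."""
    x_min, y_min, x_max, y_max = box
    depths = []
    for row in disparity_map[y_min:y_max]:
        for disparity in row[x_min:x_max]:
            depth = depth_from_disparity(disparity, focal_px, baseline_m)
            if depth is not None:
                depths.append(depth)
    return sum(depths) / len(depths) if depths else None

if __name__ == "__main__":
    disparity_map = [[50, 50], [0, 25]]
    print(mean_region_depth(disparity_map, (0, 0, 2, 2), 1000.0, 0.1))
```

Applying `mean_region_depth` to the furrow box and to an adjacent canopy box would give the two averages from which the three plant-part depths are derived.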

S4, when the unmanned aerial vehicle reaches each part of the cotton plant, the gimbal-mounted binocular camera is turned to the horizontal to collect a side image of the cotton plant, and the airborne chip of the unmanned aerial vehicle classifies the pest damage in the collected side image based on a convolutional neural network; which comprises the following steps:

S401, obtaining a convolutional neural network for cotton pest damage classification, and deploying the convolutional neural network on each unmanned aerial vehicle before the task starts; wherein obtaining the convolutional neural network comprises:

S4011, designing the convolutional neural network for the application scene, the network comprising convolutional layers, activation layers, pooling layers and fully connected layers, the output of the last fully connected layer being a 1 × 4 matrix that represents the probability distribution over the normal, light, moderate and severe categories;

S4012, collecting unmanned aerial vehicle images of the sides of cotton plants and labeling them according to ground survey results to form a data set for cotton pest classification; training the convolutional neural network on the data set, using the negative log-likelihood between the network output and the category label as the error and adjusting the parameters of the network by backpropagation;

S402, when the unmanned aerial vehicle reaches each part of the cotton plant, its gimbal-mounted binocular camera is turned to the horizontal to collect a side image of the cotton plant, and the image is transmitted to the airborne chip of the unmanned aerial vehicle in real time through a USB cable; the airborne chip calls the trained convolutional neural network to classify the pest damage in the side image.

S5, after the unmanned aerial vehicle completes the three-dimensional monitoring of cotton pests, it ascends to the height it held before descending, and sends the GPS coordinates of the monitoring point and the pest damage categories of the upper, middle and lower layers of the cotton to the ground station through the airborne data transmission module; after the ground station has received the monitoring reports of all unmanned aerial vehicles in the monitoring unit, the monitoring task of that monitoring unit is complete and the monitoring task of the next monitoring unit begins;
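The report sent in S5 and the ground station's completion check can be pictured with a small Python sketch. The record layout, the field names and the use of the four category names as strings are illustrative assumptions; the text states only that GPS coordinates and the three per-layer categories are transmitted.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class PointReport:
    """Hypothetical record one drone sends for its monitoring point."""
    drone_id: int
    longitude: float
    latitude: float
    upper: str
    middle: str
    lower: str

    def has_pest(self):
        """True if any of the three layers is not classified as normal."""
        return any(level != "normal"
                   for level in (self.upper, self.middle, self.lower))

def unit_complete(reports, drone_count):
    """A unit is done once every drone in the cluster has reported."""
    return len({r.drone_id for r in reports}) == drone_count

def infested_positions(reports):
    """Positions kept for path planning: points with pests present."""
    return [(r.longitude, r.latitude) for r in reports if r.has_pest()]

if __name__ == "__main__":
    reports = [
        PointReport(0, 86.05, 44.30, "normal", "normal", "normal"),
        PointReport(1, 86.06, 44.30, "normal", "light", "severe"),
    ]
    print(unit_complete(reports, 2), infested_positions(reports))
```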

s6, after the monitoring tasks of all the monitoring units are completed, the ground station receives pest information of all the monitoring points, only position information of the monitoring points with pests is reserved, and an operation path plan of the pesticide application unmanned aerial vehicle is generated based on a greedy algorithm, so that the cotton pest three-dimensional monitoring task of the unmanned aerial vehicle cluster is completed; which comprises the following steps:

s601, after the monitoring tasks of all the monitoring units are completed, the ground station receives the pest information of all the monitoring points, ignores the monitoring points whose crop condition is normal, retains only the position information of the monitoring points with pests, and forms a monitoring information table as follows:

$$P_1(x_1, y_1),\ P_2(x_2, y_2),\ \ldots,\ P_n(x_n, y_n)$$

wherein n represents the number of retained monitoring points at which pests are present, $P_n$ represents the coordinates of the nth point, and $(x_n, y_n)$ respectively represent the longitude and latitude of the nth point;

s602, generating an operation path plan for the pesticide application unmanned aerial vehicle based on a greedy algorithm: first, the first operation point $G_1$ of the planned path is determined, the rule being that the two-dimensional norm of its longitude and latitude is the minimum, as follows:

$$G_1 = (x_k, y_k) = \underset{(x_i, y_i),\ 1 \le i \le n}{\arg\min}\ \sqrt{x_i^2 + y_i^2}$$

wherein $(x_k, y_k)$ represents the longitude and latitude of the next operation point of the planned path, $(x_i, y_i)$ represents the longitude and latitude of the ith point in the monitoring information table, and k is an integer between 1 and n.

After the greedy algorithm adds the first operation point $G_1$ to the planned path, the corresponding point $P_k$ is deleted from the monitoring information table; the greedy algorithm then continues to search the monitoring information table for the next operation point, the rule being that the two-dimensional norm of the coordinate difference between the candidate point and all the operation points in the planned path is the minimum, wherein $(x_i, y_i)$ respectively represent the longitude and latitude of the ith point $P_i$ in the monitoring information table, $G_j$ represents the jth operation point in the planned path, t represents the number of monitoring points in the current monitoring information table, s represents the number of operation points in the current planned path, and l is an integer between 1 and s; the greedy algorithm adds the operation point $G_{s+1}$ to the planned path and deletes the corresponding point $P_k$ from the monitoring information table; the greedy algorithm repeats this process, and path planning is finished when no points remain in the monitoring information table.
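Steps s601 and s602 can be sketched as follows. This is an illustration under stated assumptions rather than the definitive procedure: longitude and latitude are treated as planar coordinates, and the rule for each subsequent point is interpreted as minimizing the sum of distances to every operation point already in the planned path. The function name `plan_path` is hypothetical.

```python
import math

def plan_path(points):
    """Greedily order the pest-positive monitoring points.

    points: list of (longitude, latitude) tuples, i.e. the monitoring
    information table. Returns the planned path as a list of tuples.
    """
    table = list(points)  # working copy of the monitoring information table
    if not table:
        return []

    # First operation point G_1: smallest 2-norm of (longitude, latitude).
    first = min(table, key=lambda p: math.hypot(p[0], p[1]))
    table.remove(first)
    path = [first]

    while table:
        # ASSUMPTION: the score of a candidate is the sum of its distances
        # to all operation points already in the planned path.
        nxt = min(
            table,
            key=lambda p: sum(math.hypot(p[0] - g[0], p[1] - g[1]) for g in path),
        )
        table.remove(nxt)  # delete the chosen point from the table
        path.append(nxt)   # and append it to the planned path
    return path

# Hypothetical coordinates, used only to exercise the function.
route = plan_path([(3, 4), (1, 1), (2, 2), (10, 10)])
```

If the rule is instead read as the distance to the most recently added operation point only, the `sum(...)` expression would be replaced by the distance to `path[-1]`, which gives the usual nearest-neighbour heuristic.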

Referring to fig. 2, the present embodiment also provides a three-dimensional monitoring system for insect damage to cotton based on a small unmanned aerial vehicle cluster, including:

the cotton canopy and furrow position detection unit is used for detecting the positions of the cotton canopy and the furrow in the unmanned aerial vehicle image; the cotton canopy and furrow position detection unit comprises feature extraction, prior box matching and non-maximum suppression; in the confidence calculation of the prior box matching, the number of prediction categories is set to 2, corresponding to the two categories of cotton and non-cotton;
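The non-maximum suppression stage of this unit can be illustrated with a minimal sketch of the standard greedy procedure. The box format `(x1, y1, x2, y2)`, the threshold of 0.5 and the function names are assumptions made for illustration and are not specified by the invention.

```python
def iou(a, b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0

def non_max_suppression(boxes, scores, iou_threshold=0.5):
    """Return indices of the boxes kept, in order of decreasing score."""
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    keep = []
    for i in order:
        # Keep a box only if it does not overlap a higher-scoring kept box.
        if all(iou(boxes[i], boxes[k]) <= iou_threshold for k in keep):
            keep.append(i)
    return keep

# Hypothetical detections: two overlapping boxes and one separate box.
boxes = [(0, 0, 10, 10), (1, 1, 11, 11), (20, 20, 30, 30)]
scores = [0.9, 0.8, 0.7]
kept = non_max_suppression(boxes, scores)
```

In this example the second box overlaps the first with an intersection over union of about 0.68, so only the first and third boxes survive.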

the cotton canopy and furrow depth calculation unit is used for estimating the depths of the furrow right below the unmanned aerial vehicle and of the cotton canopy around the furrow; the cotton canopy and furrow depth calculation unit comprises camera calibration, image pixel depth calculation, and cotton canopy and furrow depth calculation; the camera calibration is used for obtaining the internal and external parameters of the binocular camera; the image pixel depth calculation performs distortion correction according to the distortion coefficients of the camera, finds matched pixel pairs in the left and right camera images, and calculates the depth of each matched pixel pair based on the internal and external parameters of the camera; the cotton canopy and furrow depth calculation takes, on the basis of the detected cotton canopy and furrow positions, the average pixel depth of the furrow area as the cotton furrow depth, and the average pixel depth of the cotton canopy adjacent to the furrow as the cotton canopy depth;
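The depth calculation can be sketched with the standard relation for a rectified stereo pair, Z = f · B / d, where f is the focal length in pixels, B the baseline and d the disparity of a matched pixel pair. The sketch assumes that distortion correction and matching have already produced a disparity map; the focal length, baseline, masks and function names are hypothetical values chosen for illustration.

```python
def depth_from_disparity(disparity_px, focal_px, baseline_m):
    """Depth in metres of a matched pixel pair: Z = f * B / d."""
    if disparity_px <= 0:
        return None  # no valid match for this pixel
    return focal_px * baseline_m / disparity_px

def mean_region_depth(disparity_map, region_mask, focal_px, baseline_m):
    """Average depth over the pixels selected by `region_mask`."""
    depths = []
    for d_row, m_row in zip(disparity_map, region_mask):
        for d, inside in zip(d_row, m_row):
            if inside:
                z = depth_from_disparity(d, focal_px, baseline_m)
                if z is not None:  # skip unmatched pixels
                    depths.append(z)
    return sum(depths) / len(depths) if depths else None

# Hypothetical 2 x 3 disparity map (pixels) and region masks.
disparity = [[35, 35, 70],
             [35, 0, 70]]
furrow_mask = [[1, 1, 0],
               [1, 1, 0]]
canopy_mask = [[0, 0, 1],
               [0, 0, 1]]
furrow_depth = mean_region_depth(disparity, furrow_mask, focal_px=700, baseline_m=0.1)
canopy_depth = mean_region_depth(disparity, canopy_mask, focal_px=700, baseline_m=0.1)
```

The furrow region lies farther from the camera than the canopy, so its disparity is smaller and its computed depth is larger.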

the cotton pest three-dimensional monitoring unit is used for carrying out image acquisition and pest grade classification on the upper, middle and lower parts of the cotton; with the unmanned aerial vehicle located above the cotton furrow, the descent required for reaching the upper, middle and lower parts of the cotton is calculated from the depth information of the cotton canopy and the furrow; the image acquisition is performed with the tripod head binocular camera set horizontal after the unmanned aerial vehicle reaches the designated part of the cotton; and the pest grade classification is completed by the airborne chip calling a pre-trained convolutional neural network;

the operation path planning unit is used for generating a travel path plan for the pesticide application unmanned aerial vehicle on the basis of the monitoring information table according to the principle of energy consumption minimization; the monitoring information table comprises the position information of all monitoring points with insect pests, and the position information comprises the longitudes and latitudes of the monitoring points; the travel path plan of the pesticide application unmanned aerial vehicle is obtained by the greedy algorithm planning the path point by point on the basis of the monitoring information table, which aims to minimize the energy consumption of the unmanned aerial vehicle on the premise of ensuring that all pest positions are traversed.

Referring to fig. 3, which is a schematic diagram of the hierarchical division of the cotton field area to be monitored in this embodiment, the monitoring area is divided into a plurality of monitoring units, and each monitoring unit further includes a plurality of monitoring points. The monitoring area is the whole cotton field area to be monitored; in this embodiment the monitoring area is limited to the rectangular areas that are common in plant protection operations. The monitoring unit is the cotton field area that the small unmanned aerial vehicle group can monitor at one time, and its size is determined by the number of unmanned aerial vehicles in the group, the flight altitude, the forward and side overlap rates, and the like. The monitoring points are the monitoring positions of the small unmanned aerial vehicles; each position comprises longitude information and latitude information, and the distance between two adjacent monitoring points is determined by the flight altitude of the unmanned aerial vehicle, the forward and side overlap rates, and the like. After the monitoring task of a monitoring unit is completed, the small unmanned aerial vehicle group flies in translation to the next monitoring unit simultaneously at a uniform height; if, at the edge of the field, part of the small unmanned aerial vehicle group lies outside the monitoring unit, that part keeps hovering and flies to the next monitoring unit after the monitoring task of the monitoring unit is completed.

The above-mentioned embodiments are merely preferred embodiments of the present invention, and the scope of the present invention is not limited thereto; any changes made according to the shape and principle of the present invention shall be covered within the protection scope of the present invention.